The Thin Line Between Safety and Suppression

The push to restrict AI from offering “legal advice basics” or “medical advice” is often framed as a protective measure. But when “responsible AI” becomes a cloak for blanket bans, society risks trading empowerment for paternalistic control. Responsible AI must mean enabling informed access, not shutting down tools. Banning access to legal and medical advice via AI entrenches inequality by restricting knowledge to those who can afford expert help. The debate hinges on whether AI is a threat to expertise or a democratizing force. This article argues firmly for preserving AI‑driven advice within frameworks rooted in ethics and integrity.

Responsible AI Should Not Mean Silencing Legal and Medical Advice

Real “responsible AI” cannot be an excuse for censorship. Rather than silencing access to legal advice basics and medical advice, the goal should be to govern and improve that access.

Why Responsible AI Requires Access to Legal Advice Basics

Many people lack affordable access to preliminary legal guidance. AI could fill that gap by providing quick, basic pointers; for example, explaining common contract clauses, tenant rights, or regulatory steps. Restricting AI from offering such baseline guidance simply preserves barriers.

The Myth of Public Harm: Unpacking the Censorship Argument

Proponents of bans argue that AI‑based advice inevitably causes harm. But real public harm stems from ignorance, inaccessible services, and inequality, not from information itself. Denying legal or medical insight en masse punishes those who most need access. Responsible AI should mitigate risk, not impose blanket silence.

How to Use AI for Medical Advice Without Triggering Regulatory Panic

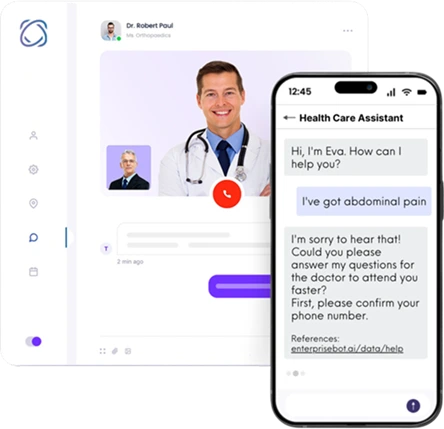

AI can and should play a complementary role in delivering medical advice, when properly governed.

Risk vs. Reward: Responsible AI in Personalized Health Guidance

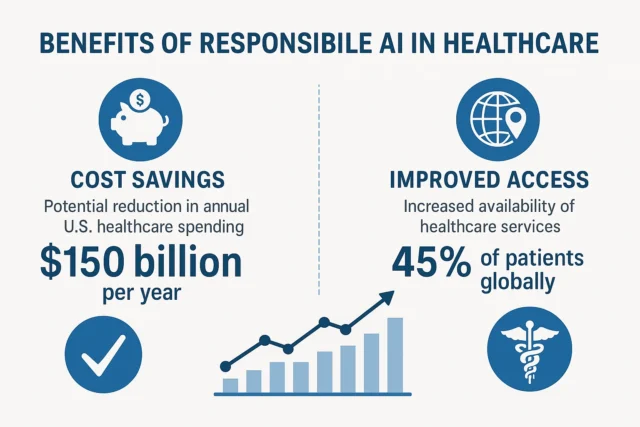

Recent research from McKinsey & Company shows that healthcare providers are aggressively adopting generative AI to enhance workflows and patient engagement (McKinsey & Company). AI‑driven guidance can support triage, provide general health education, and reduce bottlenecks in under‑served areas. The reward: better access, lower costs, faster preliminary help.

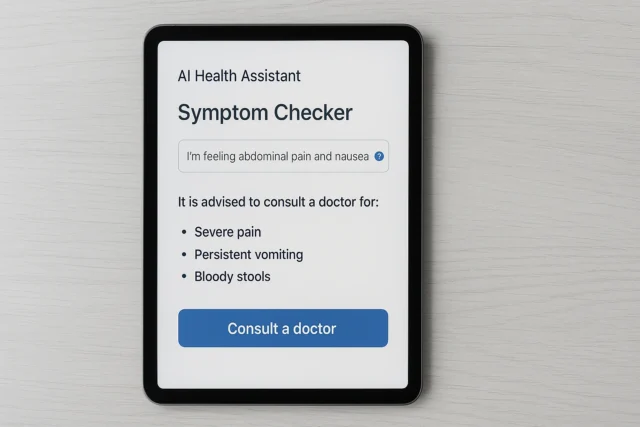

Medical Advice Isn’t Monolithic: The Case for Tiered AI Responses

Not all “medical advice” is equal. AI can offer basic advice (e.g., “see a doctor if you have symptom X”), general information (common cold vs serious conditions), or direct users to professionals, and still be valuable. Equating all AI medical outputs with full clinical diagnosis neglects nuance and condemns helpful support.
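The tiers described above can be sketched in a few lines of Python. This is only an illustration under loud assumptions: the names `Tier`, `TieredReply`, and `route_query` are hypothetical, and the keyword lists are placeholders, not clinical criteria. A real system would need professionally validated triage rules.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    GENERAL_INFO = "general information"
    BASIC_ADVICE = "basic advice"
    REFERRAL = "referral to a professional"


# Hypothetical keyword lists for illustration only; these are NOT clinical criteria.
URGENT_TERMS = ("chest pain", "trouble breathing", "severe bleeding")
SYMPTOM_TERMS = ("fever", "cough", "headache", "rash")


@dataclass(frozen=True)
class TieredReply:
    tier: Tier
    message: str


def route_query(query: str) -> TieredReply:
    """Pick a response tier for a health question using naive keyword matching."""
    text = query.lower()
    # Urgent terms are checked first so they always win over milder symptoms.
    if any(term in text for term in URGENT_TERMS):
        return TieredReply(
            Tier.REFERRAL,
            "Please contact a medical professional or emergency services now.",
        )
    if any(term in text for term in SYMPTOM_TERMS):
        return TieredReply(
            Tier.BASIC_ADVICE,
            "Here is general self-care information. "
            "See a doctor if symptoms persist or worsen.",
        )
    return TieredReply(
        Tier.GENERAL_INFO,
        "Here is general health education on this topic.",
    )
```

The point of the sketch is the shape, not the rules: the same question-answering tool can give a different kind of answer depending on severity, and none of the tiers amounts to a clinical diagnosis.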

Framework Ethics Must Evolve, Not Enforce Blanket Bans

AI governance must evolve, not default to suppression.

Censorship as a Framework Failure, Not a Feature

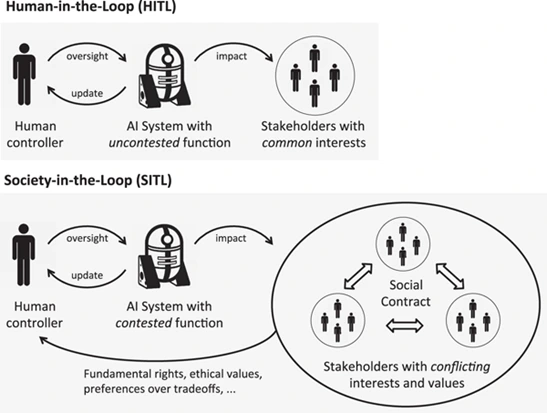

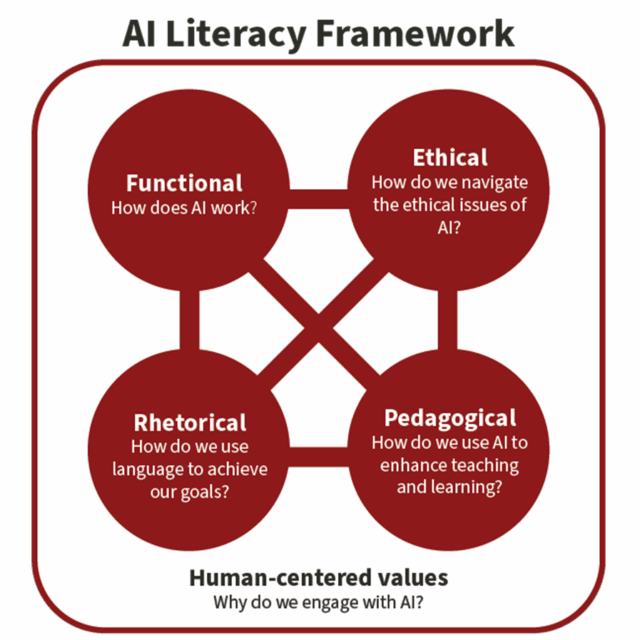

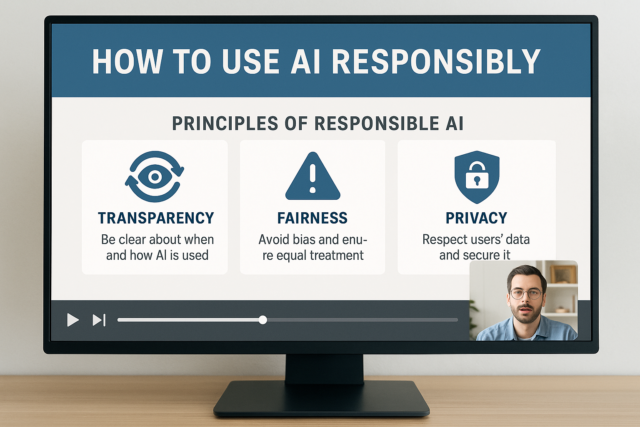

If frameworks push us toward bans rather than responsible integration, the problem lies not with AI but with governance design. Ethical frameworks should embed transparency, accountability, and human‑in‑the‑loop oversight. The Framework Convention on Artificial Intelligence shows how governance can follow human rights, fairness, and rule‑of‑law principles. (Wikipedia)
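As a rough illustration of how those three principles might look in software, the sketch below wraps a draft answer with a disclaimer (transparency), an audit record (accountability), and a review gate (human‑in‑the‑loop). Everything here is an assumption made for the example: `AuditLog`, `governed_answer`, the `confidence` score, and the `0.7` threshold are hypothetical and are not drawn from any specific framework or product.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone

DISCLAIMER = "General information only; not a substitute for a licensed professional."


@dataclass
class AuditLog:
    """In-memory audit trail; a real deployment would use durable storage."""
    entries: list = field(default_factory=list)

    def record(self, query: str, needs_review: bool) -> None:
        # Store a hash rather than the raw question to limit personal data in logs.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "query_sha256": hashlib.sha256(query.encode("utf-8")).hexdigest(),
            "needs_review": needs_review,
        })


def governed_answer(query: str, draft: str, confidence: float,
                    log: AuditLog, review_threshold: float = 0.7) -> str:
    """Return the draft with a disclaimer, or hold it for human review."""
    # `confidence` is a hypothetical score supplied by the caller.
    needs_review = confidence < review_threshold
    log.record(query, needs_review)
    if needs_review:
        return "This question has been sent to a human reviewer. " + DISCLAIMER
    return draft + "\n\n" + DISCLAIMER
```

A call such as `governed_answer("Can my landlord raise rent mid-lease?", draft, 0.9, AuditLog())` would return the draft followed by the disclaimer, while a low score would hold the draft back and flag it in the log. The design choice worth noting is that the answer is governed rather than refused.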

Why “Do No Harm” Now Means “Do More with AI”

Ethics requires that AI systems minimize harm, and withholding useful information is itself a harm, so full bans are not ethical either. Instead, regulated AI usage with accountability, data protection, and transparent disclaimers can increase access while managing risk. Censorship invokes fear; governance invokes responsibility.

Dashboards vs. Gatekeepers: Who Gets to Decide What’s ‘Responsible’?

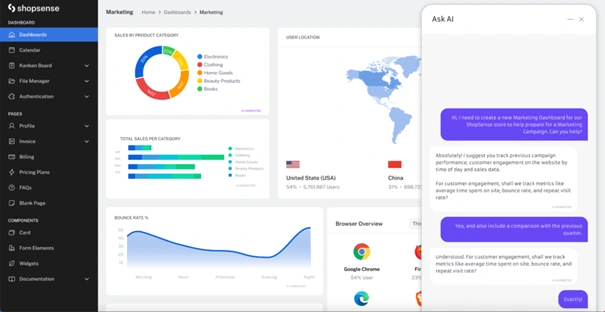

To expose the trade‑offs, here is a dashboard comparing open vs restricted AI‑advice systems:

AI Advice Access: Open vs Ban Regime

| Scenario | Access to Basic Legal/Medical Advice via AI | Oversight Mechanism | Public Reach |
| --- | --- | --- | --- |
| Open, governed access | Yes | Transparent logs, human‑in‑the‑loop review, disclaimers, data governance | High (millions globally) |
| Blanket ban | No | Regulatory enforcement, provider licensing only | Limited: only those who can afford professionals |

This dashboard underscores that open but governed AI access ensures wider societal reach without sacrificing accountability.

What Real-World Data Tells Us About AI Use in Health and Law

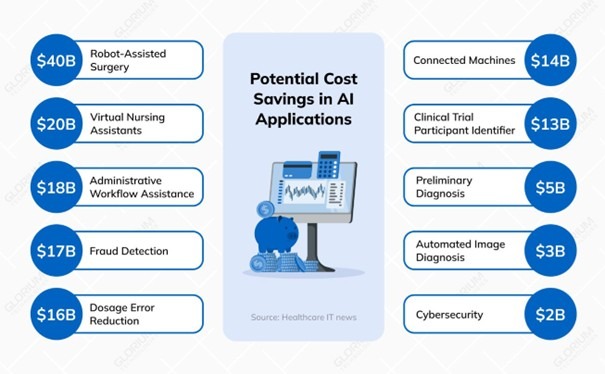

Healthcare organizations adopting AI report major gains: improved patient engagement, administrative efficiencies, and potential cost reductions (McKinsey & Company). Denying these benefits with bans ignores actual gains captured by early adopters.

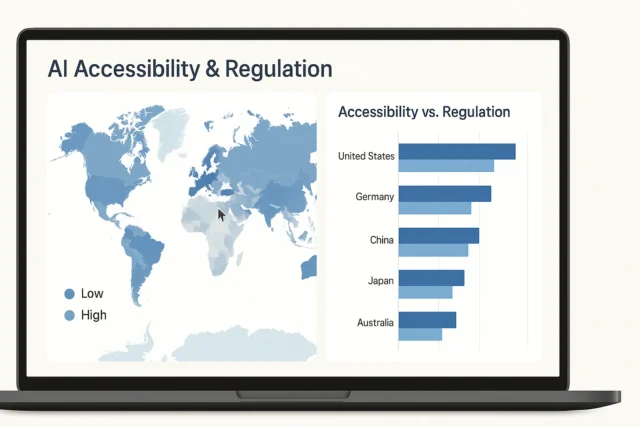

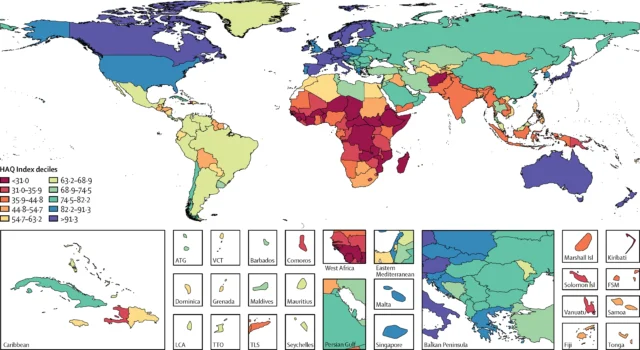

The Global Disparity: Responsible AI Is a Privilege, Not a Standard

Banning AI advice disproportionately harms lower‑income regions and vulnerable populations.

When Bans Reflect the Biases of the Powerful

In regions with robust legal and medical infrastructure, professionals remain accessible, so bans protect incumbents. In underserved areas, a ban means people lose out entirely. This perpetuates global inequality.

Responsible AI Must Bridge Gaps, Not Build Walls

The role of AI should be to democratize access. Equipped with proper ethics, disclaimers, and human review, AI can bring basic legal advice or health guidance to those who otherwise have no recourse. Banning it closes doors for the most vulnerable.

Tables of Truth: The Economic and Social Cost of AI Advice Censorship

Here is a table summarizing the estimated economic and social impact of restricting AI advice:

| Impact Area | Open‑Use AI Advice Model | Restricted AI Advice Model (Ban) |
| --- | --- | --- |
| Healthcare cost savings | Estimated 5–10% reduction in spending, roughly $200–$360B annually (US estimate) (NBER) | Loss of potential savings; higher burden on public health systems |
| Access to basic legal guidance | Broad access globally at low cost | Limited to those who can afford lawyers |
| Equity & inclusion | Increased access for underserved populations | Inequality deepens for marginalized groups |
| Innovation & knowledge diffusion | Encourages tool development, public literacy in “how to use AI” | Innovation suppressed, knowledge concentrated |

This table shows clearly that censorship penalizes both economies and social equity.

Educating Users, Not Disabling Tools: The Real How to Use AI Strategy

The meaningful alternative to bans is education.

Why User Literacy Outranks Regulatory Rigidity

Teaching people “how to use AI” responsibly (with disclaimers and skepticism) makes AI a tool, not a crutch. Users learn when to trust AI outputs, when to seek professional help, and how to interpret advice. This builds long‑term resilience.

Empowered Use Beats Prohibited Use Every Time

When users know how to verify, question, and cross‑check AI advice, the system self‑regulates. Transparency, governance, and education create responsible adoption, unlike bans, which enforce silence without building capacity.

Responsible AI Demands Freedom, Not Fear

Restricting AI from offering legal advice basics or medical advice under the guise of “responsible AI” is a misuse of regulatory power. Real responsibility lies in building ethical frameworks, strong governance, data transparency, user education, and human‑in‑the‑loop oversight. Blanket bans favor incumbents, entrench inequality, and sacrifice the democratizing potential of AI. The future demands freedom to access knowledge and tools. Responsible AI should be our guide.

References

- Generative AI in Healthcare: Adoption Trends and What’s Next

- Harnessing AI to Reshape Consumer Experiences in Healthcare

- Framework Convention on Artificial Intelligence

- The Potential Cost Savings of AI in U.S. Healthcare

- AI Self‑Regulation vs. State Regulation Boundaries