Responsible AI: Protection Mechanism or Innovation Bottleneck?

Artificial intelligence has moved from experimental labs into the core infrastructure of modern economies. Algorithms recommend treatments, draft legal arguments, detect fraud, and optimize supply chains. With that power comes an inevitable question. Should governments regulate how machines think and decide? The rise of Responsible AI frameworks suggests the answer is yes. Governments, corporations, and research institutions are rushing to define ethical guardrails for AI systems that increasingly influence human decisions.

Supporters argue that Responsible AI protects society from dangerous automation, especially in sensitive fields such as medical advice and basic legal advice. Critics respond with a blunt warning: regulation could suffocate innovation before AI's full economic potential emerges. The conflict has become one of the defining technology debates of the decade. Behind the policy language lies a deeper struggle between control and acceleration, governance and experimentation. Whether Responsible AI becomes the foundation of trustworthy innovation or the bureaucracy that slows it down depends on how societies implement it.

Responsible AI and the Governance Revolution

How Responsible AI Frameworks Are Redefining Corporate Accountability

Corporate governance used to treat technology as a technical problem. Security vulnerabilities, system reliability, and compliance audits dominated boardroom discussions. Responsible AI has reshaped that logic. Algorithmic systems can influence hiring decisions, medical recommendations, and even judicial analysis through tools that automate basic legal advice. Governance can no longer remain confined to IT departments.

Major institutions emphasize this shift. Research highlighted in the Harvard Business Review analysis on responsible AI governance explains that organizations must treat AI ethics as an enterprise-wide responsibility rather than a technical checklist.

Responsible AI governance introduces multidisciplinary oversight involving engineers, legal experts, ethicists, and executives. The goal is simple: ensure algorithmic decisions align with societal values and corporate accountability.

Governance Shift Toward Responsible AI

| Governance Aspect | Traditional Software Governance | Responsible AI Governance |
| --- | --- | --- |
| Risk focus | Security and compliance | Ethical, social, and algorithmic risks |
| Stakeholders | IT teams | Cross-functional leadership |
| Accountability | Technical ownership | Organizational responsibility |
| Transparency | Limited | Expected and documented |

Why Responsible AI Governance Overlaps with Basic Legal Advice

Once AI systems begin generating outputs that resemble basic legal advice or structured professional analysis, accountability becomes unavoidable. Regulators increasingly demand that organizations clearly distinguish between informational assistance and formal legal counsel. Without explicit boundaries, users may interpret automated responses as authoritative guidance, creating legal liability risks for companies deploying AI systems.

Companies adopting Responsible AI frameworks now create internal oversight policies that address:

- Risk classification of AI systems

- Documentation of training data sources

- Human review mechanisms

- Transparency in automated recommendations

These governance structures are not merely ethical statements. They represent the new compliance infrastructure of the AI economy.
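As a rough illustration, the oversight items listed above could be recorded in a small internal register. This is a minimal sketch, not a standard: the `AISystemRecord` fields, the `HIGH_RISK_DOMAINS` set, and the three risk tiers are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Assumption for illustration: domains an organization treats as high risk.
HIGH_RISK_DOMAINS = {"legal", "healthcare", "finance", "hiring"}


@dataclass
class AISystemRecord:
    """Hypothetical register entry for one deployed AI system."""
    name: str
    domain: str
    user_facing: bool = False
    training_data_sources: List[str] = field(default_factory=list)
    human_reviewer: Optional[str] = None
    explanation_shown_to_users: bool = False


def classify_risk(record: AISystemRecord) -> RiskTier:
    """Assign a coarse risk tier from the system's domain and exposure."""
    if record.domain in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    if record.user_facing:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def governance_gaps(record: AISystemRecord) -> List[str]:
    """List the oversight items that are still missing for this system."""
    gaps = []
    if not record.training_data_sources:
        gaps.append("training data sources not documented")
    if classify_risk(record) is RiskTier.HIGH and record.human_reviewer is None:
        gaps.append("no human reviewer assigned")
    if record.user_facing and not record.explanation_shown_to_users:
        gaps.append("recommendations not explained to users")
    return gaps


# Example: a legal tool registered without any supporting documentation.
record = AISystemRecord(name="contract-summarizer", domain="legal", user_facing=True)
print(classify_risk(record), governance_gaps(record))
```

The point of a register like this is that missing oversight becomes something a compliance team can query, rather than something discovered after an incident.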

Responsible AI vs Innovation Speed

Do Responsible AI Regulations Slow Technological Progress?

Critics argue that Responsible AI introduces friction into the innovation cycle. Startups often operate under rapid experimentation models where products evolve faster than regulatory frameworks. Introducing ethical review committees and documentation processes can slow the pace of iteration.

In sectors racing toward automation leadership, hesitation can cost billions. Developers fear that strict Responsible AI compliance could discourage risk-taking and reduce experimentation.

However, this critique ignores a fundamental reality. Unregulated AI failures can produce reputational disasters that slow innovation far more dramatically than thoughtful governance.

Why Responsible AI Could Accelerate Trustworthy Innovation

A growing body of research suggests that Responsible AI strengthens long-term innovation by increasing trust in automated systems. When organizations integrate ethical governance into AI development, stakeholders gain confidence that algorithms operate transparently and responsibly. Insights from McKinsey & Company research on trustworthy AI adoption show that organizations implementing structured ethical frameworks often scale AI initiatives faster because employees, regulators, and customers trust the systems being deployed. This trust reduces resistance to automation and accelerates adoption across industries.

Responsible AI frameworks therefore allow companies to innovate without triggering societal backlash, regulatory confrontation, or reputational damage. In this context, trust functions as a powerful economic multiplier for AI-driven transformation.

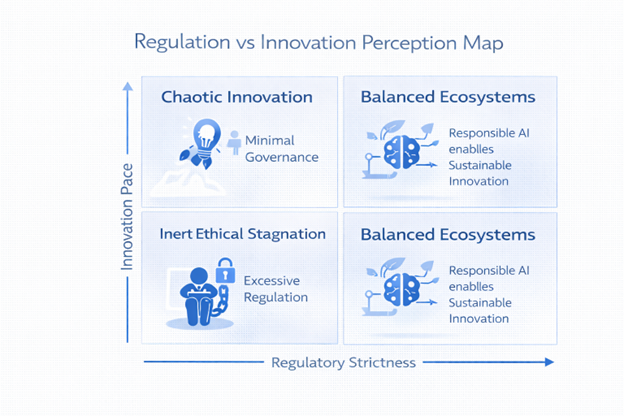

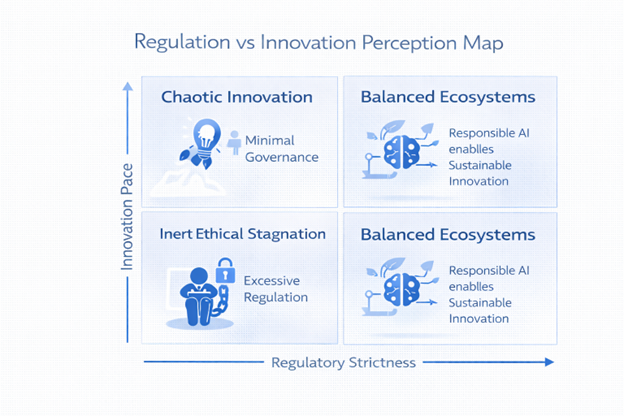

This dashboard visually illustrates how balanced governance produces the most stable innovation environments.

Responsible AI in High-Risk Domains: Medicine and Law

Responsible AI Challenges in Medical Advice Systems

AI-powered diagnostic tools now assist doctors in detecting diseases and recommending treatments. In theory, these systems reduce human error and expand access to healthcare expertise. Yet medical advice generated by algorithms raises obvious ethical concerns. Incorrect recommendations could affect patient outcomes.

MIT Technology Review’s coverage of AI in healthcare highlights that medical AI systems require transparent training data and human supervision to prevent harmful errors.

Responsible AI frameworks in healthcare emphasize validation protocols, clinical testing, and human oversight.

Responsible AI Risk Comparison Table

| Sector | Responsible AI Risk | Governance Requirement |
| --- | --- | --- |
| Healthcare | Misdiagnosis from algorithmic bias | Clinical validation frameworks |
| Legal systems | Misinterpretation of basic legal advice | Verified legal AI boundaries |
| Finance | Biased credit decisions | Algorithm auditing processes |

Responsible AI Responsibilities in Legal Advice Tools

Legal technology platforms increasingly integrate AI systems capable of summarizing legal cases, reviewing contracts, and generating basic legal guidance for users seeking quick answers. These tools dramatically improve research speed and accessibility, allowing individuals and businesses to navigate legal information more efficiently. However, despite their analytical capabilities, AI systems cannot replace professional legal judgment or fully interpret the nuances of complex legal contexts.

For this reason, Responsible AI frameworks impose clear governance requirements to prevent misuse and misunderstanding of automated legal outputs. They require:

- Clear disclaimers on automated legal outputs

- Human legal review mechanisms before critical decisions

- Transparent explanation of AI reasoning and data sources

Without these safeguards, automated legal systems could easily produce misleading interpretations, incomplete analyses, or recommendations that users mistakenly treat as formal legal counsel.
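The three safeguards above can be sketched as a thin wrapper around whatever produces the draft text. This is only an illustration under stated assumptions: the disclaimer wording, the `CRITICAL_TERMS` keyword list, and the rule that unsourced answers always go to a human are hypothetical choices, and a real system would need a far more careful trigger than keyword matching.

```python
from dataclasses import dataclass
from typing import List

# Assumption: example wording only, not vetted legal language.
DISCLAIMER = (
    "This is automated information, not legal advice. "
    "Consult a qualified lawyer before acting on it."
)

# Assumption: crude keyword trigger standing in for a real criticality check.
CRITICAL_TERMS = ("deadline", "lawsuit", "contract termination", "liability")


@dataclass
class LegalOutput:
    text: str                 # draft plus disclaimer
    sources: List[str]        # data sources shown to the user
    needs_human_review: bool  # route to a lawyer before release


def package_legal_output(draft: str, sources: List[str]) -> LegalOutput:
    """Attach a disclaimer, expose sources, and flag critical or unsourced drafts."""
    lowered = draft.lower()
    touches_critical_topic = any(term in lowered for term in CRITICAL_TERMS)
    needs_review = touches_critical_topic or not sources
    return LegalOutput(
        text=f"{draft}\n\n{DISCLAIMER}",
        sources=list(sources),
        needs_human_review=needs_review,
    )


# Example: a draft mentioning a deadline is held for human review.
result = package_legal_output("You may miss the filing deadline.", ["Civil Procedure Guide"])
print(result.needs_human_review)
```

Keeping the disclaimer, the sources, and the review flag in one returned object makes it harder for a downstream interface to show the text while silently dropping the safeguards.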

Responsible AI Framework Ethics: Control vs Autonomy

The Rise of Framework Ethics in Responsible AI Development

As AI systems grow more powerful, ethical governance has moved from academic debate into corporate infrastructure. Responsible AI frameworks now guide how organizations design, test, and deploy algorithms.

Guidelines such as the World Economic Forum’s work on Responsible AI emphasize the need for ethical guardrails that align technology with societal values.

Core framework ethics principles include:

- Algorithmic transparency

- Human accountability

- Bias detection mechanisms

- Clear governance protocols

- Ethical documentation

These frameworks transform AI development into a structured process rather than uncontrolled experimentation.

Why Responsible AI Frameworks Challenge Startup Agility

Despite their benefits, framework ethics introduce operational friction within fast-moving technology environments. Startups built on rapid experimentation cycles often struggle with governance procedures that require documentation, oversight, and ethical review. These additional steps can slow product iterations, forcing young companies to balance innovation speed with compliance and accountability expectations.

Responsible AI frameworks require structured processes such as:

- Data auditing

- Risk classification

- Ethical impact assessments

The result is a structural tension between speed and oversight. Yet as AI becomes central to economic infrastructure, ethical governance becomes unavoidable.

How to Use AI Without Breaking Responsible AI Principles

Responsible AI Guidelines for Businesses Deploying AI Tools

Organizations adopting AI technologies often ask a practical question: how to use AI responsibly while maintaining innovation momentum. Responsible AI frameworks provide operational guidance that balances both objectives.

According to Deloitte’s research on AI governance strategy, organizations that embed ethical guidelines early in the development process avoid costly redesigns later.

Responsible AI Implementation Steps:

- Establish Responsible AI governance committees

- Perform algorithmic risk assessments before deployment

- Audit training datasets for bias and representational gaps

- Define strict boundaries for systems offering medical advice or basic legal advice

- Educate teams on how to use AI responsibly across workflows

Responsible AI transforms AI deployment into a structured management discipline.
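One of the steps above, auditing training datasets for representational gaps, lends itself to a short sketch. The function below compares each group's share of a dataset with a reference share and reports the shortfalls. The function name, the 5-point `tolerance`, and the example age bands and reference shares are all assumptions for illustration; choosing the right reference population is itself a governance decision.

```python
from collections import Counter
from typing import Dict, Iterable, Mapping


def representation_gaps(
    records: Iterable[Mapping[str, str]],
    attribute: str,
    reference_shares: Mapping[str, float],
    tolerance: float = 0.05,
) -> Dict[str, float]:
    """Return groups whose share of the data falls short of the reference
    share by more than `tolerance`, mapped to the size of the shortfall."""
    counts = Counter(record[attribute] for record in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        shortfall = expected - observed
        if shortfall > tolerance:
            gaps[group] = round(shortfall, 3)
    return gaps


# Example with made-up data: older age bands are under-represented.
records = [{"age_band": "18-39"}] * 8 + [{"age_band": "40-64"}] * 2
reference = {"18-39": 0.4, "40-64": 0.4, "65+": 0.2}
print(representation_gaps(records, "age_band", reference))
```

A check like this only covers under-representation on a single attribute. It says nothing about label quality or intersectional gaps, which would need separate audits.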

Responsible AI Best Practices for Scaling AI Safely

Scaling AI systems across an enterprise requires repeatable governance mechanisms that ensure consistency, transparency, and accountability across departments. As AI expands from isolated pilots to core operational infrastructure, organizations must implement structured oversight processes that guide how systems are deployed and monitored.

Key operational practices include:

- Centralized AI documentation repositories

- Continuous model auditing processes

- Clear escalation procedures for ethical risks

- Cross-functional oversight committees

Responsible AI does not eliminate innovation. It transforms innovation into a disciplined and sustainable organizational capability.
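Continuous auditing and escalation can be made concrete with a simple recurring check. The sketch below computes approval rates per group for a batch of automated decisions and flags the batch for escalation when the gap between the highest and lowest rate exceeds a threshold. The 0.2 threshold, the function names, and the choice of approval-rate gap as the metric are assumptions for this example, not a recommended standard.

```python
from typing import Dict, Iterable, Tuple


def selection_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute the approval rate per group from (group, approved) pairs."""
    totals: Dict[str, int] = {}
    approved: Dict[str, int] = {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {group: approved[group] / totals[group] for group in totals}


def audit_batch(decisions: Iterable[Tuple[str, bool]], max_gap: float = 0.2) -> dict:
    """Summarize a batch and flag it when the approval-rate gap is too wide."""
    rates = selection_rates(decisions)
    gap = max(rates.values()) - min(rates.values()) if rates else 0.0
    return {"rates": rates, "gap": gap, "escalate": gap > max_gap}


# Example with made-up decisions for two groups.
batch = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
report = audit_batch(batch)
if report["escalate"]:
    print("Escalate to oversight committee:", report["rates"])
```

Running a check like this on every batch, and routing flagged batches to the cross-functional committee, is one way the practices in the list above could fit together.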

Responsible AI as Competitive Advantage

Why Companies That Adopt Responsible AI Early Gain Trust

Trust has become the currency of the digital economy. Companies that demonstrate transparent AI governance attract customers, regulators, and investors who increasingly demand accountability in automated decision systems. Research published in IBM Responsible AI strategy insights emphasizes that organizations with mature AI ethics programs face fewer regulatory conflicts while strengthening long-term brand credibility. As scrutiny around algorithmic decisions grows, responsible governance signals reliability and competence.

Responsible AI therefore functions not as a regulatory burden but as a powerful market differentiator that strengthens competitive positioning in AI-driven industries.

Responsible AI as the Infrastructure of AI Economies

As AI systems increasingly influence critical decisions such as hiring, lending approvals, medical recommendations, and basic legal advice, trust becomes a fundamental condition for adoption. Organizations, regulators, and users must believe that automated systems operate transparently, fairly, and responsibly.

Without this confidence, even highly advanced technologies face resistance from the public and policymakers. Responsible AI frameworks therefore play a crucial role in building credibility and enabling widespread acceptance of AI-driven decision systems across sensitive sectors.

The Inevitable Future of Responsible AI

The debate surrounding Responsible AI often frames regulation as the enemy of innovation. That framing ignores the structural reality of modern technological revolutions. When technologies reach societal scale, governance inevitably follows. Electricity, aviation, pharmaceuticals, and financial systems all underwent the same transformation. AI is simply the latest infrastructure to face the transition from experimentation to accountability.

Responsible AI will not stop innovation. It will determine which innovations survive public scrutiny. Systems offering medical advice or assisting with basic legal advice must meet ethical standards because their consequences extend beyond technical performance. Companies that integrate framework ethics early will scale faster, gain regulatory trust, and avoid reputational disasters.

The real risk is not excessive governance. The real risk is deploying powerful AI systems without responsible oversight. In that scenario innovation does not accelerate. It collapses under the weight of public distrust. Responsible AI therefore becomes the operating system of the AI era, shaping how societies learn to use AI safely while unlocking its economic power.

References

Harvard Business Review — What Is Responsible AI?

McKinsey & Company — The State of AI: Generative AI’s Breakout Year

MIT Technology Review — AI in Health Care Is Already Changing Medicine

World Economic Forum — AI Governance Alliance Report

Deloitte Insights — AI Governance and Responsible AI Strategy

H-in-Q — AI Killing Billable Hours in Knowledge Services