MLOps at the Crossroads of Scale and Control

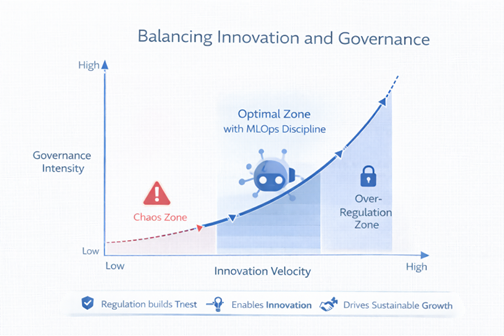

Artificial intelligence no longer lives in research labs. It lives in revenue forecasts, supply chains, credit scoring engines, and marketing automation stacks. As enterprises industrialize AI, MLOps has emerged as the operational doctrine that promises to transform fragile machine learning experiments into scalable production systems. Yet a sharp debate divides executives. Some argue that it is the infrastructure backbone of serious AI. Others claim that it introduces governance overhead that suffocates experimentation and slows innovation. This tension reflects a deeper conflict between speed and control, autonomy and accountability. Organizations that dismiss it as bureaucracy underestimate the complexity of scaling AI. Those that weaponize it as rigid compliance architecture risk killing creativity. The question is not whether it matters. The question is whether it enables scalable intelligence or becomes a procedural cage.

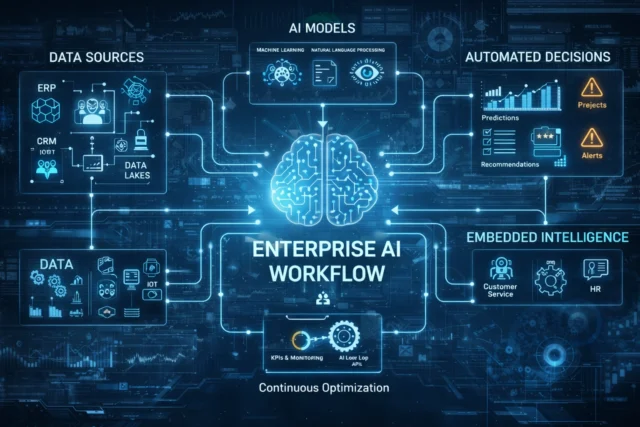

Why MLOps Emerged as the Backbone of Enterprise AI

The Rise of Production-Grade AI

Early AI teams celebrated prototype success without preparing for production chaos. Models trained in notebooks collapsed when confronted with real-world variability. MLOps emerged to close that gap. Inspired by DevOps, it applies structured automation, monitoring, and continuous integration principles to machine learning workflows. According to insights from Harvard Business Review on scaling AI initiatives, organizations struggle not with model accuracy but with operationalization. MLOps bridges experimentation and deployment across cloud environments, so that models scale across public, private, and hybrid infrastructures. Without it, AI remains theatrical. With it, AI becomes operational infrastructure.

How MLOps Reinvents the Data Pipeline

The traditional data pipeline was linear, brittle, and designed for reporting rather than learning systems. Modern AI systems require adaptive architectures that respond to shifting data patterns and evolving business objectives. MLOps transforms the data pipeline into a living infrastructure by embedding Data Ops discipline, automated validation checkpoints, feature tracking mechanisms, version control governance, and intelligent retraining triggers.

Automation eliminates fragmented handoffs and manual orchestration errors. Data flows in continuous cycles, models recalibrate based on real-world feedback, and performance monitoring becomes proactive rather than reactive. This integration aligns data engineering and machine learning teams under a unified operational framework, delivering systemic transformation instead of isolated optimization.
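An automated validation checkpoint of the kind described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `SCHEMA` columns, the value ranges, and the 1% error-rate tolerance are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class ValidationReport:
    passed: bool
    errors: list


# Hypothetical schema: column name -> (expected type, allowed min, allowed max).
SCHEMA = {
    "age": (int, 18, 120),
    "income": (float, 0.0, 1e7),
}


def validate_batch(rows, schema=SCHEMA, max_error_rate=0.01):
    """Check each row against the schema and fail the batch if too many rows are bad."""
    errors = []
    bad_rows = 0
    for i, row in enumerate(rows):
        row_ok = True
        for col, (typ, lo, hi) in schema.items():
            value = row.get(col)
            if not isinstance(value, typ):
                errors.append(
                    f"row {i}: {col} has type {type(value).__name__}, expected {typ.__name__}"
                )
                row_ok = False
            elif not lo <= value <= hi:
                errors.append(f"row {i}: {col}={value} outside [{lo}, {hi}]")
                row_ok = False
        if not row_ok:
            bad_rows += 1
    # An empty batch is treated as a failure rather than silently passing.
    error_rate = bad_rows / len(rows) if rows else 1.0
    return ValidationReport(passed=error_rate <= max_error_rate, errors=errors)
```

A pipeline would call `validate_batch` before training or scoring and halt, or alert, when `passed` is false, which is what turns validation from a manual review into a checkpoint.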

Traditional ML vs MLOps-Enabled ML

| Dimension | Traditional ML | MLOps-Enabled ML |
| --- | --- | --- |
| Deployment | Manual release cycles | Automated CI/CD pipelines |
| Data Pipeline | Static and isolated | Continuous and monitored |
| Version Control | Ad hoc experiment tracking | Structured version control |
| Governance | Minimal documentation | Integrated AI governance |
| Scalability | Limited | Cloud-native scalability |

The Case Against MLOps: Does Structure Kill Innovation?

When MLOps Slows Experimentation

Critics argue that heavy operational frameworks introduce structural rigidity that clashes with the experimental nature of machine learning. AI governance committees, layered validation checkpoints, and formal compliance reviews can stretch development timelines and dilute iterative momentum.

Startups engineered for rapid prototyping often view structured operational frameworks as enterprise bureaucracy disguised as operational maturity. When approval hierarchies multiply and documentation requirements expand, model releases slow and creative risk-taking declines. Innovation thrives on speed, autonomy, and fast failure cycles. If governance intensity outpaces experimentation capacity, teams shift focus from building models to navigating process. The risk is tangible: excessive oversight can stall progress and suppress breakthrough thinking.

The Bureaucratization of Automation

Automation promises acceleration, efficiency, and scale. Yet when organizations accumulate excessive tooling, overlapping platforms, and redundant compliance dashboards, friction replaces speed. DevOps principles, applied mechanically to AI without contextual adaptation, can impose architectural complexity that overwhelms teams. Tool sprawl fragments ownership and blurs accountability. Engineers shift their attention from refining models to maintaining pipelines and debugging integrations.

As highlighted in MIT Technology Review’s analysis of AI operational complexity, many enterprises overinvest in infrastructure before validating business use cases. In such environments, operational discipline turns performative. Governance frameworks expand, dashboards multiply, but measurable value creation stalls behind procedural noise.

MLOps as a Strategic Lever for AI Governance

Responsible AI Governance

AI governance is no longer optional in a climate of regulatory scrutiny, public skepticism, and reputational exposure. Organizations deploying AI systems must demonstrate traceability, explainability, and accountability at every stage of the lifecycle. A mature operational framework embeds version control systems, structured audit trails, automated documentation, and model lineage tracking directly into workflows. This creates end-to-end transparency from raw data ingestion to deployed model behavior.

The World Economic Forum’s framework on responsible AI emphasizes that accountability mechanisms must be embedded within technical infrastructure, not layered on afterward. This operational model delivers that embedded discipline, transforming governance from abstract policy into enforceable operational reality.
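One way to make an audit trail enforceable rather than aspirational is to make it tamper-evident. The sketch below uses hash chaining, which is an assumption of this example and not something the cited frameworks prescribe; the event names and the helpers `append_entry` and `verify` are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(body):
    """Stable SHA-256 over a JSON-serializable dict."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, event, details):
    """Append an audit entry whose hash covers its content and the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"event": event, "details": details, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("event", "details", "prev_hash")}
        if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True
```

Because each entry commits to the one before it, editing an earlier record invalidates the chain, so lineage from data ingestion to deployment can be checked mechanically.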

MLOps in Regulated Industries

Financial institutions and healthcare providers cannot deploy opaque models. They require explainability, monitoring, and rollback capabilities. Structured lifecycle management enables reproducibility and model tracking essential for audits. Data lineage within the data pipeline ensures regulatory alignment. Instead of slowing innovation, structured operational governance reduces risk exposure and accelerates approval processes in regulated sectors.
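Rollback, in particular, is easy to promise and hard to deliver without a record of what was live and when. A minimal in-memory sketch follows; the `ModelRegistry` class and its method names are hypothetical and do not represent the API of any specific registry product.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelRegistry:
    versions: list = field(default_factory=list)  # every registered version, oldest first
    history: list = field(default_factory=list)   # promotion history, newest last

    def register(self, version: str) -> None:
        if version in self.versions:
            raise ValueError(f"{version} is already registered")
        self.versions.append(version)

    def promote(self, version: str) -> None:
        """Make a registered version the live one and record the promotion."""
        if version not in self.versions:
            raise KeyError(f"{version} has not been registered")
        self.history.append(version)

    @property
    def live(self) -> Optional[str]:
        return self.history[-1] if self.history else None

    def rollback(self) -> str:
        """Revert to the previously live version and return its name."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.history[-1]
```

The promotion history is what an auditor would ask for: it shows which model served decisions at any point and makes reverting a one-step operation.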

Governance Maturity Stages Enabled by MLOps

| Stage | Characteristics | MLOps Role |
| --- | --- | --- |
| Initial | Experimental AI | Minimal structure |
| Managed | Documented processes | Version control introduced |
| Integrated | Cross-functional governance | Automated audit trails |
| Optimized | Continuous monitoring | Full AI governance integration |

The Convergence of MLOps, DevOps, and Data Ops

From DevOps to MLOps to Data Ops

Operational silos fracture AI performance by separating data engineering, software development, and machine learning into disconnected workflows. DevOps transformed software delivery through continuous integration and deployment. Data Ops strengthened data reliability and governance across analytics environments. Structured machine learning lifecycle management unifies both disciplines under coordinated processes, eliminating fragmentation. This convergence produces synchronized pipelines where data ingestion, feature engineering, model training, validation, and deployment function as an integrated system rather than isolated tasks.

McKinsey research on scaling AI shows that organizations aligning cross-functional AI operations consistently outperform competitors. Structured lifecycle management institutionalizes this alignment, converting coordination into repeatable operational advantage.

Cloud Deployment Models and MLOps Scalability

MLOps thrives within cloud-native architectures that provide elasticity, resilience, and distributed processing power. Public cloud environments accelerate experimentation by offering rapid provisioning and scalable compute resources. Private cloud infrastructures reinforce control, security, and regulatory alignment. Hybrid models balance flexibility with compliance demands. The choice of cloud deployment model ultimately determines how machine learning models scale across regions, departments, and products, while automation orchestrates continuous retraining, monitoring, and lifecycle management.

Automation vs Autonomy: Who Controls the AI Factory?

Automation as the Core Promise of MLOps

Automation defines mature MLOps environments. Core automated processes include:

- Continuous integration and deployment for ML models

- Automated data validation within the data pipeline

- Model drift detection and retraining triggers

- Performance monitoring dashboards

- Version control tracking across datasets and models

Automation reduces human error, shortens feedback loops, and strengthens operational resilience across the machine learning lifecycle. Without structured automation, response cycles lag behind data shifts and market changes. IBM’s insights on AI automation frameworks emphasize that sustainable operational AI depends on end-to-end automated lifecycle management embedded directly into production systems.
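Of the processes listed above, drift detection with a retraining trigger is the easiest to illustrate. The sketch below uses the population stability index (PSI), one common drift measure picked here as an assumption; the bin count and the 0.2 threshold are illustrative defaults, not recommendations from the sources cited in this article.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """Compare two samples of one feature; larger values mean a bigger distribution shift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference sample is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids taking the logarithm of zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def should_retrain(reference, live, threshold=0.2):
    """Retraining trigger: fire when drift on the monitored feature exceeds the threshold."""
    return population_stability_index(reference, live) > threshold
```

In practice a monitoring job would run `should_retrain` on a schedule against recent production data and enqueue a training run when it returns true, which is how the feedback loop shortens without a person watching a dashboard.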

Human Oversight in an Automated MLOps World

Total automation invites strategic and ethical risk when decision systems operate without contextual judgment. Algorithms optimize for performance metrics, not societal consequences or brand reputation. Governance checkpoints and clearly defined escalation paths ensure that human supervision intervenes when ethical, legal, or high-impact business decisions arise. MLOps embeds these safeguards directly into operational workflows, creating structured pause points rather than reactive crisis management. This balance preserves algorithmic efficiency while reinforcing executive accountability. Organizations that ignore human oversight expose themselves to regulatory backlash and reputational damage. The future of AI operations depends on disciplined collaboration between automated systems and empowered decision-makers who retain ultimate responsibility.
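A structured pause point can be as simple as a routing rule that decides when a prediction may act on its own and when it must go to a person. The following sketch is illustrative only: the `Prediction` fields, the confidence floor, and the impact ceiling are assumptions, and a real policy would come from legal and risk owners.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    ESCALATE = "escalate"


@dataclass
class Prediction:
    score: float   # model confidence in [0, 1]
    impact: float  # hypothetical business impact, for example a loan amount


def route(prediction, min_confidence=0.9, max_auto_impact=10_000.0):
    """Send low-confidence or high-impact predictions to a human reviewer."""
    if prediction.score < min_confidence or prediction.impact > max_auto_impact:
        return Decision.ESCALATE
    return Decision.AUTO_APPROVE
```

Note that a confident model does not bypass the gate: a high-impact decision escalates regardless of score, which keeps accountability with the people who own the outcome.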

Building a High-Performance MLOps Data Pipeline

Designing a Scalable Data Pipeline

A resilient data pipeline demands architectural clarity and disciplined integration across every stage of the machine learning lifecycle. Core components include scalable ingestion layers, automated validation modules, governed feature stores, controlled training environments, deployment orchestration frameworks, continuous monitoring systems, and closed-loop feedback integration. Data Ops principles enforce data quality, consistency, and traceability before models reach production. Cloud-native infrastructure delivers the elasticity and distributed processing required for sustained performance at scale.

Version Control and Reproducibility in MLOps

Version control applied across datasets and models is what makes machine learning results reproducible. When every model can be traced to the exact data and configuration that produced it, audits and rollbacks become routine. Without that discipline, reproducibility disappears and model performance becomes unreliable.
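For the reproducibility this section's heading refers to, a common starting point is to fingerprint every training run from its inputs. The sketch below is a minimal illustration under stated assumptions: the `fingerprint` helper is hypothetical, it assumes the dataset fits in memory as JSON-serializable rows, and production systems would typically hash files or use a dedicated data versioning tool instead.

```python
import hashlib
import json


def fingerprint(dataset_rows, params, code_version):
    """Derive a stable identifier from the data, hyperparameters, and code version of a run."""
    payload = json.dumps(
        {"data": dataset_rows, "params": params, "code": code_version},
        sort_keys=True,  # key order must not change the fingerprint
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Illustrative inputs; the values are made up for the example.
rows = [{"x": 1.0, "y": 2.0}, {"x": 2.0, "y": 4.0}]
run_a = fingerprint(rows, {"lr": 0.1, "epochs": 5}, "abc123")
run_b = fingerprint(rows, {"epochs": 5, "lr": 0.1}, "abc123")
run_c = fingerprint(rows, {"lr": 0.2, "epochs": 5}, "abc123")
```

Here `run_a` and `run_b` match because only the key order differs, while `run_c` differs because a hyperparameter changed. Storing the fingerprint next to each model artifact lets a team confirm whether two models really came from the same inputs.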

7-Step Enterprise MLOps Pipeline Structure

- Data ingestion

- Automated validation

- Feature engineering

- Model training

- Testing and evaluation

- Deployment automation

- Continuous monitoring and retraining
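The seven steps above can be sketched as an ordered list of functions that pass a shared context along. Everything in this example is a toy assumption: the in-memory data, the one-parameter model, and the error threshold stand in for real ingestion, training, and deployment systems.

```python
def ingest(ctx):
    # Toy in-memory data standing in for a real source; one row is deliberately incomplete.
    ctx["raw"] = [{"x": 1.0, "y": 2.0}, {"x": 2.0, "y": 4.0},
                  {"x": 3.0, "y": None}, {"x": 4.0, "y": 8.0}]
    return ctx


def validate(ctx):
    # Drop rows with missing values before they reach training.
    ctx["clean"] = [r for r in ctx["raw"] if r["x"] is not None and r["y"] is not None]
    return ctx


def engineer_features(ctx):
    ctx["features"] = [(r["x"], r["y"]) for r in ctx["clean"]]
    return ctx


def train(ctx):
    # Least-squares slope for a line through the origin: sum(x*y) / sum(x*x).
    sxy = sum(x * y for x, y in ctx["features"])
    sxx = sum(x * x for x, _ in ctx["features"])
    ctx["slope"] = sxy / sxx
    return ctx


def evaluate(ctx):
    # Mean absolute error, measured on the training data for brevity.
    errors = [abs(y - ctx["slope"] * x) for x, y in ctx["features"]]
    ctx["mae"] = sum(errors) / len(errors)
    return ctx


def deploy(ctx):
    # Deployment is gated on the evaluation result.
    ctx["deployed"] = ctx["mae"] <= ctx["max_mae"]
    return ctx


def monitor(ctx):
    # In this toy version, a model that failed the gate is flagged for retraining.
    ctx["needs_retraining"] = not ctx["deployed"]
    return ctx


PIPELINE = [
    ("Data ingestion", ingest),
    ("Automated validation", validate),
    ("Feature engineering", engineer_features),
    ("Model training", train),
    ("Testing and evaluation", evaluate),
    ("Deployment automation", deploy),
    ("Continuous monitoring and retraining", monitor),
]


def run_pipeline(max_mae=0.5):
    ctx = {"max_mae": max_mae, "completed": []}
    for name, step in PIPELINE:
        ctx = step(ctx)
        ctx["completed"].append(name)
    return ctx
```

Keeping each stage as a separate function with an explicit order is the design point: any stage can be tested, replaced, or monitored on its own, and the `completed` list gives a simple record of how far a run got.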

Is MLOps the Future of AI or an Enterprise Comfort Blanket?

The Competitive Advantage of Mature Operational AI

Organizations with mature MLOps capabilities operate with structural confidence and measurable competitive advantage. They institutionalize repeatability, accountability, and scalability across the entire AI lifecycle. Instead of treating machine learning as isolated experimentation, they embed it into core operations. These organizations consistently demonstrate:

- Faster production deployment

- Lower operational risk

- Integrated AI governance

- Scalable automation across cloud infrastructures

Each capability compounds the others. Speed increases without sacrificing control. Risk declines without slowing innovation. Governance becomes embedded rather than reactive. Automation scales without chaos. In this context, structured operational AI does not merely support AI initiatives. It transforms AI from an experimental cost center into a durable strategic asset.

Organizations That Resist MLOps

Companies resisting MLOps often claim that creativity thrives in unstructured environments where experimentation moves faster than process design. However, unmanaged data pipeline workflows accumulate technical debt that quietly erodes scalability. Without version control discipline, reproducibility disappears and model performance becomes unreliable.

The absence of embedded AI governance increases exposure to regulatory scrutiny and reputational damage. Innovation detached from operational discipline rarely survives sustained growth or enterprise-level complexity.

Comparative Snapshot

- With MLOps: structured automation, scalable deployment, governance readiness

- Without MLOps: fragmented workflows, hidden risk, limited scalability

MLOps Is the Price of Serious AI

The debate surrounding MLOps reveals a false dilemma. Innovation does not collapse under structure. It collapses under operational fragility. MLOps is not administrative excess. It is the infrastructure that transforms machine learning from experiment to enterprise capability. Organizations that reject it chase speed but sacrifice resilience. Those that implement disciplined operational frameworks unlock automation, governance alignment, scalable cloud architectures, and durable competitive advantage. AI without operational discipline remains tactical. AI with it becomes strategic infrastructure. The future of enterprise intelligence belongs to organizations that treat MLOps as the operating system of AI. Scalable intelligence demands structure. The winners will not debate it. They will master it.

References

Harvard Business Review – How to Scale AI Across the Enterprise

McKinsey – The State of AI and Scaling AI in Organizations

MIT Technology Review – The Operational Challenges of AI Deployment

World Economic Forum – Responsible AI Framework

IBM – AI Automation and Operational AI Insights

H-in-Q – Open Source MLOps: Innovation or Security Risk?