The term MLOps has become a battleground between automation and human insight. Organizations are torn: some argue that removing human checkpoints accelerates model deployment, while others warn that it unleashes chaos rather than control. In an age of complex data pipelines, cloud deployment models, Data Ops demands, version control challenges, and heightened AI governance, the real question emerges: is human involvement in MLOps a strategic safety net or a crippling bottleneck? This analysis takes a definite stance: human involvement remains critical, but only when applied selectively and alongside automation that respects scale.

The Core Role of Human Oversight in MLOps

From Deployment to Decision: Where Humans Fit in the MLOps Lifecycle

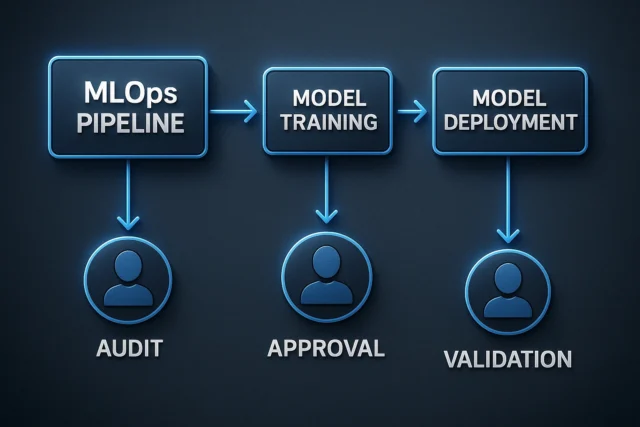

Humans act as gatekeepers for high-risk decisions in the MLOps lifecycle. According to process governance frameworks in MLOps, humans are required for sign-offs, audits, and pre-production verification steps (Dataiku Knowledge Base). In practice, human review adds contextual judgment, especially when models affect complex systems or rely on ambiguous data. Key human intervention points include:

- Approving final model deployment in regulated environments

- Validating training data against domain-specific ethical risks

- Investigating performance degradation post-deployment

- Interpreting anomalies or edge-case predictions before escalation

However, if every stage is bottlenecked by manual approvals, the pipeline becomes slow and fragile. The right balance: automation handles routine tasks; humans intervene where nuance matters.
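The balance described above, with automation for routine stages and humans where nuance matters, can be sketched as a small routing rule. This is only an illustration under stated assumptions: the `Stage` class, its risk flags, and the stage names are hypothetical and simply mirror the intervention points listed earlier.

```python
from dataclasses import dataclass


# Hypothetical stage descriptor; the flags are illustrative assumptions,
# not part of any specific MLOps framework.
@dataclass(frozen=True)
class Stage:
    name: str
    regulated: bool = False         # stage runs in a regulated environment
    ethical_risk: bool = False      # stage touches domain-specific ethical risk
    anomaly_detected: bool = False  # monitoring flagged something unusual


def needs_human_review(stage: Stage) -> bool:
    """Return True only when at least one risk flag is set."""
    return stage.regulated or stage.ethical_risk or stage.anomaly_detected


def route(stages: list[Stage]) -> dict[str, str]:
    """Map each stage name to 'human' or 'auto'."""
    return {s.name: "human" if needs_human_review(s) else "auto" for s in stages}


pipeline = [
    Stage("unit_tests"),
    Stage("training_data_validation", ethical_risk=True),
    Stage("batch_scoring"),
    Stage("production_deploy", regulated=True),
]
routing = route(pipeline)
print(routing)
```

Routine stages stay fully automated by default, so adding a manual checkpoint becomes an explicit decision rather than a habit.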

MLOps and the Data Pipeline: Precision or Paralysis?

Why Human Intervention in Data Ops May Improve or Impede Flow

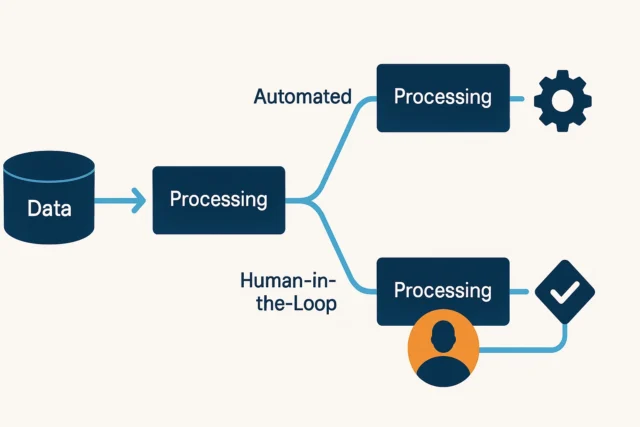

In any MLOps system, the data pipeline forms the operational backbone: it is where raw data becomes insight and where machine learning models draw their predictive power. Every transformation, from ingestion to feature engineering, affects model integrity. This makes human intervention in the pipeline a high-stakes decision. Over-engineering manual checkpoints can stall innovation, while under-monitoring opens the door to silent failures and bias propagation. Interventions must therefore be surgical, not habitual. Organizations need to apply human oversight precisely where anomalies, ambiguity, or ethical risk occur. Otherwise, the data pipeline ceases to be a strategic asset and becomes an operational liability.

Automated vs Human-in‑the‑Loop Data Pipelines

| Dimension | Fully Automated Pipeline | Human-in-the-Loop Pipeline |
| --- | --- | --- |
| Throughput | High | Lower |
| Latency | Minimal | Increased |
| Quality control | Automated checks | Manual review + insights |
| Adaptability to edge cases | Weak | Strong |
| Scalability | Excellent | Challenged |

When a Data Ops pipeline is completely automated, throughput and scalability are high, but subtle errors, biases, or drift might slip through. Conversely, a human-in-the-loop approach boosts contextual accuracy but risks delaying deployment. In MLOps, the decision point is which layers merit human judgement and which can be safely automated.
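One way to picture that decision point is a triage step that lets automation settle the clear cases and sends only the ambiguous middle band to a reviewer. The sketch below is an assumption-laden example: the missing-value metric, the function names, and both thresholds are hypothetical and would need tuning for any real pipeline.

```python
from typing import Optional, Sequence


def null_rate(values: Sequence[Optional[float]]) -> float:
    """Fraction of missing (None) entries in a batch."""
    if not values:
        raise ValueError("batch is empty")
    return sum(v is None for v in values) / len(values)


def triage_batch(rate: float, pass_below: float = 0.01,
                 reject_above: float = 0.20) -> str:
    """Decide who handles a batch based on its missing-value rate.

    The thresholds are illustrative assumptions, not recommended values.
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    if rate < pass_below:
        return "auto_pass"      # clearly healthy: no human needed
    if rate > reject_above:
        return "auto_reject"    # clearly broken: no human needed
    return "human_review"       # ambiguous: escalate for judgement


clean = [1.0] * 100
ambiguous = [1.0] * 19 + [None]
broken = [1.0, None, 2.0, 3.0]

for batch in (clean, ambiguous, broken):
    print(triage_batch(null_rate(batch)))
```

Only the middle band consumes reviewer time, which keeps throughput high while preserving human judgement for the cases automation cannot settle.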

Automation vs Accountability: The DevOps Paradox in MLOps

Can DevOps Principles Scale With Human Checks Still in Place?

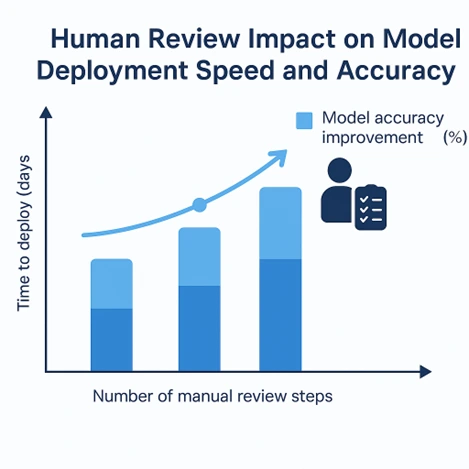

Modern MLOps borrows heavily from traditional DevOps, embracing continuous integration, continuous delivery (CI/CD), and rapid, iterative experimentation. However, unlike classic DevOps, deploying machine learning models introduces non-deterministic behavior, unpredictable drift, and opaque decision-making, which makes human intervention essential, especially during validation and governance stages (Medium).

When human review increases accuracy by, say, 4% but doubles deployment time, the business question becomes: is the trade-off worth it? In high-risk domains, yes. In fast-moving consumer applications, less so. MLOps teams must weigh automation efficiency against governance risk.
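The trade-off can be made concrete with a rough break-even check. Everything in this sketch is a hypothetical assumption for illustration: the function name, the cost model (errors avoided versus days of delay), and every number except the 4% accuracy gain taken from the example above.

```python
def review_is_worth_it(accuracy_gain: float,
                       predictions_per_release: int,
                       cost_per_error: float,
                       extra_delay_days: float,
                       cost_per_delay_day: float) -> bool:
    """Compare the value of avoided errors with the cost of slower deployment.

    A deliberately simple model: all inputs are assumptions a team would
    have to estimate for its own domain.
    """
    avoided_error_cost = accuracy_gain * predictions_per_release * cost_per_error
    delay_cost = extra_delay_days * cost_per_delay_day
    return avoided_error_cost > delay_cost


# Hypothetical high-risk domain: each error is expensive.
high_risk = review_is_worth_it(0.04, 10_000, 500.0, 5, 10_000.0)

# Hypothetical consumer application: each error is cheap.
consumer_app = review_is_worth_it(0.04, 10_000, 0.5, 5, 10_000.0)

print(high_risk, consumer_app)
```

With identical accuracy gains and delays, the answer flips purely on the cost of a single error, which is why the same review step can be justified in one domain and wasteful in another.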

Version Control in MLOps: Gatekeeping or Guardrails?

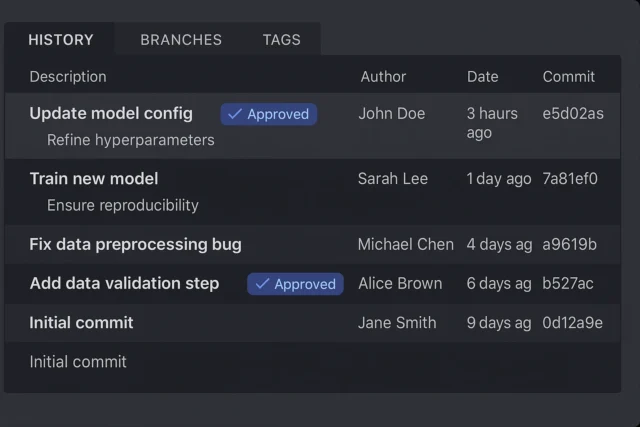

Version Control as a Tool for Trust or Bureaucratic Slowdown

Effective MLOps depends on robust version control across code, data, features, and models (ml-ops.org). It ensures traceability, reproducibility, and auditability throughout the lifecycle. Without it, teams risk silent drift, inconsistent results, and compliance failures. Version control also aligns DevOps and Data Ops, enabling structured collaboration. It is not optional; it is foundational to maintaining operational integrity.

Benefits vs Limitations of Human‑Involved Version Control

| Benefit | Limitation |
| --- | --- |
| Enhanced audit trail and reproducibility | Slower approvals |
| Traceability for AI governance | Bottlenecks in rapid iteration |
| Improved alignment across teams (DevOps + Data Ops) | Risk of over-governance lowering agility |

Having humans in version control review loops adds trust and traceability, both essential for compliance. But when every update requires weeks of sign-offs, the MLOps pipeline becomes slow and fragile. The key is to place selective human checkpoints at high-impact version changes rather than at every commit.
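A selective checkpoint of this kind could look like the following sketch. It assumes, purely for illustration, that model versions follow a `major.minor.patch` scheme and that only a major bump counts as a high-impact change; both the scheme and the function names are hypothetical.

```python
def parse_version(version: str) -> tuple[int, int, int]:
    """Split a 'major.minor.patch' string into integers."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def requires_human_signoff(old: str, new: str) -> bool:
    """Require sign-off only when the major version increases.

    Treating a major bump as 'high impact' is an assumption made for
    this example; a real policy might also consider data or schema changes.
    """
    return parse_version(new)[0] > parse_version(old)[0]


print(requires_human_signoff("1.4.2", "1.4.3"))  # patch bump
print(requires_human_signoff("1.4.2", "1.5.0"))  # minor bump
print(requires_human_signoff("1.4.2", "2.0.0"))  # major bump
```

Routine patch and minor updates flow through automatically, while the audit trail still records a named approver for every change that alters the model's contract.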

AI Governance Within MLOps: Human Judgment or Machine Learning Law?

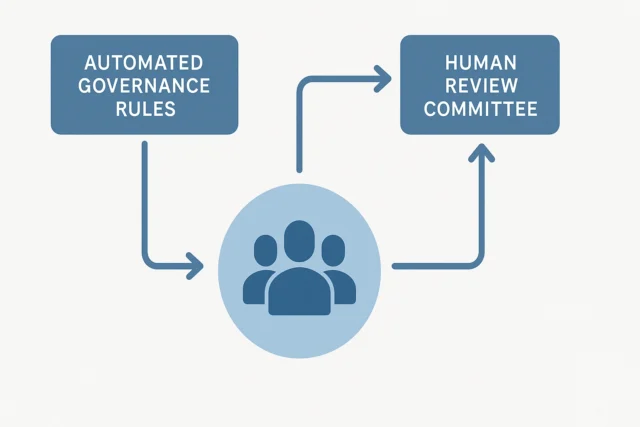

When to Trust the Code vs the Committee

Governance is where automation and human responsibility collide. With MLOps deeply integrated into business operations, systems must deliver results while maintaining ethical, legal, and reputational standards (ml-ops.org).

- Humans drive ethical reviews, bias detection, and edge-case judgement.

- Machines drive monitoring, alerts, and automated retraining triggers.

If governance is treated purely as automated policy enforcement, nuance is lost. If treated purely as human review meetings, speed suffers. MLOps must embed human judgement in the loops that matter and automation everywhere else.
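The division of labour above can be sketched as a monitoring rule in which the machine measures drift and a human is pulled in only when the shift is large. This is a minimal example under stated assumptions: the drift metric (a mean shift scaled by the reference standard deviation), the thresholds, and the action names are all hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def drift_score(reference: Sequence[float], live: Sequence[float]) -> float:
    """Shift of the live mean from the reference mean, in reference std devs."""
    spread = stdev(reference)  # needs at least two reference points
    if spread == 0:
        raise ValueError("reference data has zero variance")
    return abs(mean(live) - mean(reference)) / spread


def governance_action(score: float, retrain_at: float = 1.0,
                      escalate_at: float = 3.0) -> str:
    """Pick an action; thresholds are illustrative assumptions."""
    if score >= escalate_at:
        return "escalate_to_human"  # large shift: needs human judgement
    if score >= retrain_at:
        return "auto_retrain"       # moderate shift: machine handles it
    return "ok"                     # no meaningful shift


reference = [10, 11, 9, 10, 10, 11, 9, 10]
stable = [10, 10, 11, 9]
shifted = [11, 11, 11, 11]
broken = [14, 14, 14]

for window in (stable, shifted, broken):
    print(governance_action(drift_score(reference, window)))
```

Automation covers monitoring and routine retraining, while the escalation branch reserves human attention for the shifts most likely to carry ethical or business risk.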

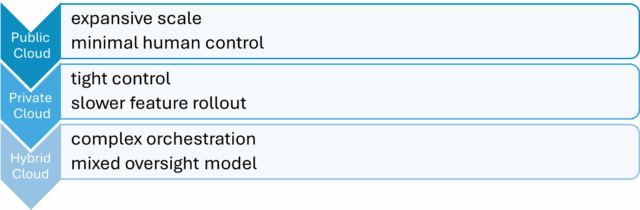

The Deployment Model of Cloud Computing in MLOps: Centralized Control or Decentralized Chaos?

How Cloud Models Shape Oversight and Automation

Public cloud environments are designed for speed, elasticity, and cost efficiency, making them ideal for automating most stages of the MLOps pipeline. However, this often comes at the cost of human context: model decisions may scale rapidly without sufficient scrutiny. In contrast, private and hybrid cloud environments offer more control, enabling organizations to insert human judgment into sensitive steps such as model approval, data versioning, or compliance reviews. The trade-off is reduced agility.

The most effective MLOps strategy does not choose one extreme. It applies automation where reliability is proven, and activates human oversight where domain context, risk, or ethics demand it.

MLOps: Why the Human Touch Ultimately Strengthens AI

Automation in MLOps is not a threat to the human element; applied correctly, it is its amplifier. Human oversight is not obsolete: it is essential for governance, version control, and edge-case judgement in data pipelines, cloud deployments, and DevOps-inspired workflows. But when human checkpoints are overused, they become bottlenecks that turn MLOps into a missed opportunity. The future of MLOps is not human-less automation but smart automation with strategic human touchpoints that turn models into business assets rather than costly experiments.

References

- Process Governance in MLOps – Dataiku Knowledge Base

- MLOps Principles and Versioning – ml-ops.org

- Model Governance in MLOps – ml-ops.org

- Best Practices for MLOps in 2025 – Medium

- h-in-q.com/blog/