Most AI market research tools will tell you that Synthetic Data Market Research is the future. They won’t tell you it has four distinct personalities and three of them will mislead you if you don’t know which one you’re dealing with.

The promise is real: AI-generated synthetic data can speed up study design, protect respondent privacy, and let you stress-test a $200,000 research programme before a single real human sees your survey. But the same technology, applied to the wrong problem, produces what looks like insight and functions like noise. The difference is not the tool. It is the use case.

This article maps the four positions on the synthetic data sliding scale (Evangelist, Optimist, Hesitant, and Rejector) so you know exactly when to reach for synthetic data, when to proceed with caution, and when to refuse it entirely. At H-in-Q.com, our AI research practice applies this framework to every mixed-methods engagement we run for clients.

What Is Synthetic Data in Market Research? The Definition That Actually Matters

Synthetic data in market research is AI-generated information that statistically mirrors a real respondent population without containing any actual personal data. A synthetic dataset preserves the correlations, distributions, and behavioral patterns of the original data while being mathematically untraceable to any individual respondent.

This matters because market research sits at an uncomfortable intersection: you need rich, granular data to make confident decisions, and you need to protect the privacy of the people who gave you that data. Synthetic data is the technical solution to that tension, but only when the synthetic model captures genuine statistical signal rather than fabricating it.

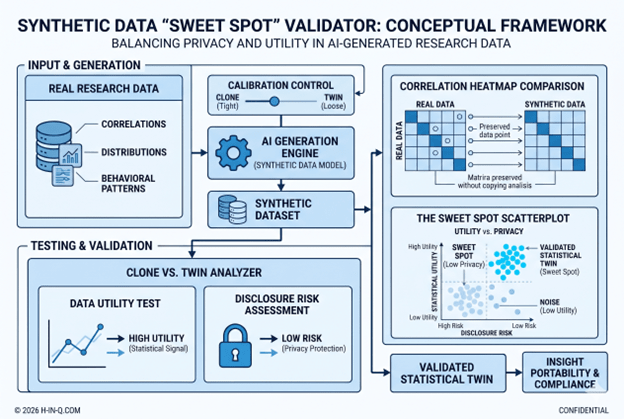

The critical distinction is between a Statistical Twin and a clone. A clone reproduces individual records. A twin reproduces the relationships between variables: the forest, not each specific tree. Knowing which you have built is the first analytical discipline of synthetic data work.

Why the Synthetic Data Debate Is the Wrong Conversation to Have in 2026

Researchers asking, “Is synthetic data good or bad?” are asking the wrong question. The right question is: good or bad for what, specifically?

According to Gartner’s 2024 AI in Market Research report, over 67% of enterprise research teams now have synthetic data tools available to them, but fewer than 22% have a documented policy on when to use them. That gap is where bad decisions get made. A researcher with no framework will reach for synthetic data because it is fast and cheap, not because it is appropriate.

The absence of a use-case framework is the primary driver of synthetic data misuse in commercial market research. Tools are neutral; methodology is not.

The real cost of misuse is not just a bad dataset. It is a business decision made on fabricated confidence. When a product team launches a concept because synthetic data showed 73% purchase intent and that number was generated by amplifying 40 real responses into 4,000 mathematical echoes, the error does not stay in the research report. It moves into the P&L.
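To see how much false precision that amplification adds, here is a minimal sketch of the margin-of-error arithmetic for the example above. It assumes a simple random sample and the normal approximation for a proportion; the function name `margin_of_error` is illustrative and does not come from any research platform.

```python
import math

def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Half-width of the normal-approximation 95% confidence interval for a proportion."""
    return z * math.sqrt(p * (1 - p) / n)

p_hat = 0.73        # purchase intent from the example above
n_real = 40         # real respondents
n_inflated = 4000   # rows after synthetic amplification

moe_real = margin_of_error(p_hat, n_real)
moe_inflated = margin_of_error(p_hat, n_inflated)

print(f"n={n_real}: 73% +/- {moe_real * 100:.1f} points")
print(f"n={n_inflated}: 73% +/- {moe_inflated * 100:.1f} points")
```

The reported interval shrinks tenfold, from roughly ±14 points to roughly ±1.4 points, yet only 40 people were ever asked. The narrow interval describes the generator, not the market.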

This is why the sliding scale framework exists: not to position synthetic data as good or evil, but to map exactly which problems it solves and which problems it manufactures.

The Four Positions on the Synthetic Data Sliding Scale: A Practitioner’s Framework

Position 1: The Evangelist: Strategic Design & Pre-Flight Validation

Be an Evangelist when synthetic data functions as a strategic design partner before you spend a dollar on live fieldwork.

This is synthetic data at its most powerful and most defensible. Three specific applications define this position.

Step 1: Category Discovery and Entry Point Mapping. Before locking a questionnaire, run synthetic populations through a structured Category Entry Point (CEP) exercise. In a bottled water study, for example, the question is not “why do you drink water?”; it is “what moments in your day create the need for hydration?” A synthetic population built from behavioral and segmentation data will surface consumption moments your research team has not anticipated. This expands the questionnaire’s frame of reference before real fieldwork begins, so you do not ask 2,000 respondents the wrong questions at $4 per complete.

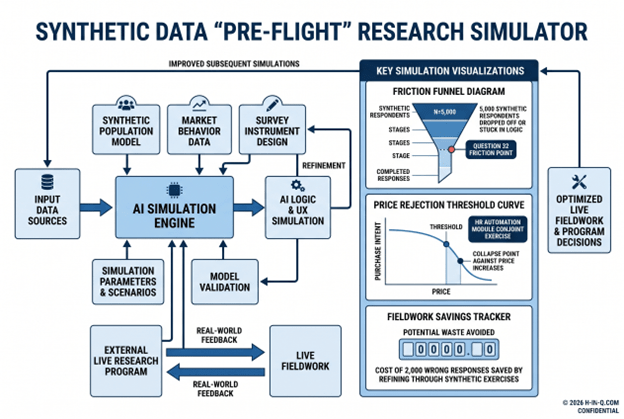

Step 2: Technical Stress Testing (The Flight Simulator Model). A well-designed questionnaire is an engineering product. Running 1,000 synthetic respondents through your survey before launch is a flight simulator; it will catch logic failures, skip patterns that create dead ends, response scale fatigue at question 32, and UX friction that increases drop-off rates. None of this requires real human responses because you are testing the instrument, not the market.

Step 3: New Product Wind-Tunnel Testing. Launching a concept into a synthetic market built from historical purchase data reveals structural breaking points, specifically price rejection thresholds and feature-value mismatches, early and cheaply. A client testing a new HR automation module ran 5,000 synthetic “employees” through a conjoint exercise before live fieldwork. The synthetic pass revealed that the value proposition collapsed at a price point 18% below their planned launch price. That discovery cost less than a single focus group.
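The flight-simulator idea from Step 2 can be sketched in a few lines. This is an illustrative example, not a production survey engine: the `SURVEY` routing table, the question IDs, and the helper names are all hypothetical, and the synthetic respondents simply answer at random because the goal is to exercise the instrument, not to model the market.

```python
import random

# Hypothetical questionnaire: each question maps answer options to the next question.
# "END" marks a legitimate completion. Q3 -> "Q9" is a deliberate routing bug
# (Q9 does not exist), and Q5 is deliberately orphaned (nothing routes to it).
SURVEY = {
    "Q1": {"yes": "Q2", "no": "Q3"},
    "Q2": {"daily": "Q4", "weekly": "Q4"},
    "Q3": {"price": "Q4", "taste": "Q9"},
    "Q4": {"done": "END"},
    "Q5": {"done": "END"},
}

def run_respondent(survey, rng, start="Q1", max_steps=50):
    """Walk one synthetic respondent through the survey with random answers.
    Returns (path, outcome), where outcome is 'complete', 'dead_end', or 'loop'."""
    path, current = [], start
    for _ in range(max_steps):
        if current == "END":
            return path, "complete"
        if current not in survey:
            return path + [current], "dead_end"
        path.append(current)
        answer = rng.choice(sorted(survey[current]))
        current = survey[current][answer]
    return path, "loop"

def stress_test(survey, n=1000, seed=0):
    """Run n synthetic respondents and collect routing failures."""
    rng = random.Random(seed)
    outcomes = {"complete": 0, "dead_end": 0, "loop": 0}
    visited, broken_targets = set(), set()
    for _ in range(n):
        path, outcome = run_respondent(survey, rng)
        outcomes[outcome] += 1
        visited.update(q for q in path if q in survey)
        if outcome == "dead_end":
            broken_targets.add(path[-1])
    unreachable = set(survey) - visited
    return outcomes, broken_targets, unreachable

outcomes, broken_targets, unreachable = stress_test(SURVEY)
print(outcomes)
print("Broken routing targets:", broken_targets)
print("Never-reached questions:", unreachable)
```

Random answers are enough here because the test targets logic, not opinion. A real instrument would also need quota rules, piping, and timing checks, which this sketch leaves out.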

The Evangelist position is defensible because the synthetic data is never presented as market truth; it is scaffolding for a better research design.

Position 2: The Optimist: Privacy-Compliant Data Portability

Be an Optimist when synthetic data solves a real cross-border data governance problem, provided the Privacy-Utility Trade-off is correctly calibrated.

The GDPR (in Europe) and the CNDP (Morocco’s Commission Nationale de contrôle de la Protection des Données à caractère personnel) both impose restrictions on transferring personal data across jurisdictions. For a research organization operating across MENA and the US, sharing a raw dataset of 800 Moroccan consumer responses with a US analytics team is a compliance exposure even with consent, even with anonymization.

A correctly built statistical twin solves this problem by sharing the insight without sharing the individuals.

The calibration challenge is what practitioners call the Clone vs Twin Problem. If a synthetic model is calibrated too tightly to the original data, it produces clones: the output is effectively an anonymized copy of each respondent, which provides no privacy protection and no analytical value beyond the original. If calibrated too loosely, it generates noise: statistically valid distributions that have no meaningful relationship to the actual market.

The value of synthetic data for privacy-compliant portability lives in the Sweet Spot of Generalization: the zone where the model captures variable correlations without memorizing individual records. A dataset that correctly reproduces the relationship between “frequency of AI tool adoption” and “organizational size” without reproducing any specific company’s profile is a compliant, useful statistical twin. This allows research insights to move between jurisdictions while the personal data stays where it was collected.
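A toy version of the clone, twin, and noise outcomes makes the calibration problem concrete. The sketch below is an assumption-laden illustration: the "real" data is simulated, the twin generator is a plain bivariate normal standing in for whatever model a commercial platform uses, and the two diagnostics (`utility_gap`, `disclosure_share`) are simplified stand-ins for formal utility and disclosure-risk tests.

```python
import math
import random

rng = random.Random(7)

# Hypothetical "real" dataset: 200 records, two standardized variables
# (think adoption frequency and organizational size), correlation around 0.8.
real = []
for _ in range(200):
    x = rng.gauss(0, 1)
    real.append((x, 0.8 * x + 0.6 * rng.gauss(0, 1)))

def pearson(records):
    xs, ys = zip(*records)
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in records)
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)

def moments(records):
    xs, ys = zip(*records)
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs) / (n - 1))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys) / (n - 1))
    return mx, my, sx, sy

mx, my, sx, sy = moments(real)
r = pearson(real)

def make_clone():
    # Calibrated too tightly: every real record copied with negligible jitter.
    return [(x + rng.gauss(0, 0.001), y + rng.gauss(0, 0.001)) for x, y in real]

def make_twin():
    # Sweet spot: fresh records drawn from the fitted joint distribution.
    out = []
    for _ in range(len(real)):
        z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
        out.append((mx + sx * z1, my + sy * (r * z1 + math.sqrt(1 - r * r) * z2)))
    return out

def make_noise():
    # Calibrated too loosely: plausible marginals, but the relationship is gone.
    return [(rng.gauss(mx, sx), rng.gauss(my, sy)) for _ in range(len(real))]

def utility_gap(synth):
    """Absolute difference between real and synthetic correlation (lower is better)."""
    return abs(pearson(real) - pearson(synth))

def disclosure_share(synth, eps=0.01):
    """Share of synthetic records sitting almost on top of a real record (lower is better)."""
    near = sum(1 for s in synth if min(math.dist(s, p) for p in real) < eps)
    return near / len(synth)

results = {name: (utility_gap(data), disclosure_share(data))
           for name, data in [("clone", make_clone()),
                              ("twin", make_twin()),
                              ("noise", make_noise())]}

for name, (gap, share) in results.items():
    print(f"{name:5s}  correlation gap={gap:.3f}  near-copies={share:.0%}")
```

The clone preserves the correlation but nearly every record is a near-copy of a real one. The noise set has almost no near-copies but loses the correlation. Only the twin scores well on both, which is the pattern a formal validation should confirm before a dataset crosses a border.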

Position 3: The Hesitant: Classical Statistics vs. ML Hype

Be Hesitant (specifically, skeptical) when the proposed use of ML-based synthetic generation is justified by technical novelty rather than analytical need.

Missing data imputation is the primary arena where this tension surfaces. When a survey dataset has 12% item non-response on a key attitudinal scale, the remediation options are: listwise deletion, mean substitution, multiple imputation by chained equations (MICE), or ML-based generative imputation.

The ML option is the most technically impressive. It is not always the most analytically appropriate.

When data quality is high, meaning the missingness is random rather than systematic and the observed data is structurally sound, classical imputation models (linear regression for continuous variables, logistic regression for categorical) match ML performance on predictive accuracy while offering full methodological transparency. A research director defending results to a Chief Marketing Officer needs to explain the analytical choices. “We used multiple imputation by chained equations, which assumes MAR missingness and has a 40-year methodological literature” is a defensible position. “We used a generative adversarial network to fill the gaps” is not.
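The transparency argument is easier to appreciate with the mechanics in view. The sketch below shows stochastic regression imputation repeated five times, which is the building block that chained-equations methods cycle through. It is a simplification under stated assumptions: the data is simulated, only one variable has gaps, and the regression coefficients are held fixed rather than redrawn for each imputation as a proper multiple imputation would do. All variable names are hypothetical.

```python
import math
import random

rng = random.Random(42)

# Hypothetical data: a fully observed predictor and an attitudinal score
# with roughly 12% item non-response.
n = 300
predictor = [rng.gauss(0, 1) for _ in range(n)]
true_score = [3.0 + 0.5 * z + rng.gauss(0, 0.5) for z in predictor]
# Missing completely at random (a special case of MAR): drop each score with p = 0.12.
observed = [s if rng.random() >= 0.12 else None for s in true_score]

def fit_ols(xs, ys):
    """Least-squares line y = a + b*x, plus the residual standard deviation."""
    k = len(xs)
    mx, my = sum(xs) / k, sum(ys) / k
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    resid_var = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys)) / (k - 2)
    return a, b, math.sqrt(resid_var)

# Fit the model on complete cases only.
obs_x = [z for z, s in zip(predictor, observed) if s is not None]
obs_y = [s for s in observed if s is not None]
a, b, resid_sd = fit_ols(obs_x, obs_y)

def impute_once():
    """Fill each gap with the regression prediction plus a random residual draw."""
    return [s if s is not None else a + b * z + rng.gauss(0, resid_sd)
            for z, s in zip(predictor, observed)]

m = 5  # number of imputed datasets
completed = [impute_once() for _ in range(m)]
completed_means = [sum(d) / n for d in completed]
pooled_mean = sum(completed_means) / m  # pooled point estimate across imputations
between_var = sum((v - pooled_mean) ** 2 for v in completed_means) / (m - 1)

print(f"model: score = {a:.2f} + {b:.2f} * predictor (residual sd {resid_sd:.2f})")
print(f"pooled mean = {pooled_mean:.3f}, between-imputation variance = {between_var:.5f}")
```

Every number in the output can be explained in one sentence to a non-technical stakeholder: a fitted line, a residual spread, and an average across five filled-in datasets. That auditability is the point of the Hesitant position.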

If a transparent classical statistical model adequately solves the imputation problem, the preference for ML is an aesthetic choice, not an analytical one. Aesthetic choices in quantitative research create audit risk.

The hesitance is not a rejection of ML. It is a demand for the right tool for the right problem, the same discipline that governs the entire sliding scale.

Position 4: The Rejector: The Sample Size Inflation Fallacy

Be a firm Rejector when synthetic data is proposed as a mechanism for inflating sample size to achieve statistical significance that the real data does not support.

This is the most common and the most dangerous misuse of synthetic data in commercial market research, and it deserves a direct treatment.

The fallacy works like this: a research team collects N=30 responses on a niche B2B topic, an honest, resource-constrained sample. Someone then proposes generating N=2,970 synthetic records to “complete” the sample to N=3,000, achieving the statistical power needed for subgroup analysis. The synthetic data, they argue, introduces “random noise” to avoid perfect cloning. Therefore, the 3,000-record dataset is analytically valid.

This argument is wrong at the level of fundamental statistical logic.

A synthetic model trained on 30 responses can only reproduce patterns that exist in those 30 responses. Adding noise changes the surface variance; it does not add new information. The 2,970 synthetic records are mathematical transformations of the same 30 real data points. Running subgroup analysis on that dataset is not more statistically powerful than running it on the original 30; it carries the same information, spread across 100 times as many rows.

Worse, the inflated dataset creates a false confidence signal. A chi-square test on N=3,000 will produce p-values that look significant. The significance is an artifact of artificial sample size, not evidence of real market differences. Decisions made on that basis are made on fabricated confidence.
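The artifact is easy to reproduce. The sketch below uses a hypothetical 2x2 table (two segments, yes/no purchase intent) from 30 real respondents, then scales every cell by 100 to mimic a generator that faithfully reproduces the observed proportions at N=3,000. The cell counts are invented for illustration; the chi-square formula is the standard one for a 2x2 table without continuity correction, and the p-value uses the exact relationship for one degree of freedom.

```python
import math

def chi_square_2x2(a, b, c, d):
    """Pearson chi-square statistic for the table [[a, b], [c, d]] (no continuity correction)."""
    n = a + b + c + d
    return n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))

def p_value_df1(chi2):
    """Upper-tail p-value for a chi-square statistic with 1 degree of freedom."""
    return math.erfc(math.sqrt(chi2 / 2))

# Hypothetical real sample, N=30: segment A is 9 yes / 6 no, segment B is 6 yes / 9 no.
real_table = (9, 6, 6, 9)
# "Inflated" sample, N=3,000: the same proportions, every cell multiplied by 100.
inflated_table = tuple(cell * 100 for cell in real_table)

chi2_real = chi_square_2x2(*real_table)
chi2_inflated = chi_square_2x2(*inflated_table)
p_real = p_value_df1(chi2_real)
p_inflated = p_value_df1(chi2_inflated)

print(f"N=30:   chi-square={chi2_real:.2f}, p={p_real:.3f}")
print(f"N=3000: chi-square={chi2_inflated:.2f}, p={p_inflated:.2e}")
```

With identical proportions, the statistic grows in direct proportion to N: 1.2 on the real sample (p around 0.27, nowhere near significance) becomes 120 on the inflated one, with a p-value that looks overwhelming. Nothing about the market changed between the two runs.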

Turning N=30 into N=3,000 adds volume and zero new human truth. The signal does not get stronger; only the noise gets louder.

The correct response to an underpowered sample is a better sample, not synthetic amplification. If live fieldwork is not possible, the honest research position is a clearly labeled exploratory study with directional findings, not a statistically inflated dataset that misrepresents its own evidential weight.

How AI Is Transforming Synthetic Data Applications for Research Teams in 2026

The evolution of large language models and generative AI has genuinely expanded the legitimate use cases for synthetic data in market research, but it has also expanded the surface area for misuse. Understanding the difference requires separating three layers of AI application.

Layer 1: Generative AI for Instrument Design. LLM-assisted questionnaire construction is now standard in enterprise research workflows. Tools that use AI to flag leading questions, detect scale fatigue, and model respondent cognitive load before fieldwork are producing measurably better instruments. This is not controversial; it is quality control applied earlier in the process.

Layer 2: Synthetic Populations for Segmentation Simulation. The most promising frontier in AI-augmented research is using synthetic populations, built from aggregated behavioral, demographic, and attitudinal data, to simulate how different segments will respond to new stimuli before live testing. This is not replacing respondents. It is building a smarter sampling frame and a more targeted screening instrument. The synthetic simulation informs the real study; it does not substitute for it.

Layer 3: AI-Generated “Respondents.” This is where the methodology becomes contested. Several platforms now offer fully AI-generated survey responses, marketed as synthetic respondents. For highly structured, knowledge-based tasks (testing whether a user can navigate a product configuration flow, for example), AI respondents have demonstrated acceptable validity. For attitudinal and preference research (“how do you feel about this brand?” or “would you pay more for this feature?”), the validity evidence does not currently support commercial deployment.

At H-in-Q.com, our position is that AI-generated respondents are an appropriate tool for cognitive usability testing and logic validation. They are not an appropriate source of market truth on questions that require lived experience to answer.

Tools and Resources for Synthetic Data in Market Research (2026)

Mostly.AI: synthetic data generation platform with privacy audit trail. Strong for GDPR-compliant data portability. Paid, enterprise tier.

Gretel.ai: developer-oriented synthetic data SDK with differential privacy controls. Best for teams with data engineering capability. Freemium + paid.

Synthesized: enterprise synthetic data platform with statistical validation scoring. Includes the Clone/Twin calibration diagnostic that operationalizes the Sweet Spot framework. Paid.

Python (SDV Library): open-source Synthetic Data Vault. Covers tabular, relational, and time-series data. Full methodological transparency. Free.

KNIME Analytics Platform: workflow-based statistical imputation including MICE. Best for teams preferring classical imputation over ML. Free, with commercial add-ons.

FAQ: Synthetic Data in Market Research

What is the difference between synthetic data and anonymized data?

Anonymized data removes identifiers from real respondent records while preserving the original responses. Synthetic data is entirely AI-generated: no real response is present in the output. Anonymization protects privacy at the individual level; synthetic data eliminates personal data entirely by replacing it with statistically equivalent artificial records.

Can synthetic data be used to comply with GDPR in cross-border research?

Yes, when correctly calibrated. A synthetic dataset that captures statistical relationships without reproducing individual records can fall outside the definition of personal data in GDPR Article 4(1) (Recital 26 addresses anonymous information), which can make it transferable across jurisdictions without the data processing agreements that personal data requires. The key condition is that the model does not memorize individual records, a criterion that requires formal validation, not just vendor assurance.

How do you know if your synthetic data model is in the "Sweet Spot" of generalization?

Run a disclosure risk assessment alongside a utility test. Disclosure risk measures how closely synthetic records resemble real ones (low risk = good privacy). Utility measures whether the synthetic data reproduces the key statistical relationships present in the original (high utility = analytically valid). A well-calibrated model scores low on disclosure risk and high on utility simultaneously. Most enterprise platforms (Mostly.AI, Synthesized) include these diagnostics.

Is it ever acceptable to use synthetic data to increase sample size?

No, not for statistical inference. Adding synthetic records to increase N does not add statistical power because the additional records contain no new information. They are mathematical transformations of the existing data. If you need a larger sample for valid subgroup analysis, the only legitimate path is additional real fieldwork. Synthetic inflation creates false confidence in underpowered data.

What is a Statistical Twin in market research?

A Statistical Twin is a synthetic dataset that reproduces the correlation structure and distribution properties of a real dataset without reproducing any individual record. Unlike a clone (which copies individuals), a twin captures the relationships between variables, the patterns that drive insight, while providing full privacy protection. The term distinguishes high-quality synthetic data from low-quality replication.

When should a researcher choose classical imputation over ML-based synthetic imputation?

When data quality is high, missingness is random (Missing At Random, or MAR), and methodological transparency is required for stakeholder reporting. Classical methods like Multiple Imputation by Chained Equations (MICE) are well-validated, auditable, and produce results that can be clearly explained to non-technical decision-makers. Reserve ML-based generative imputation for complex datasets where classical models demonstrably underperform.

Conclusion

Synthetic data is not a single tool; it is a spectrum of techniques with radically different validity profiles depending on where and how you deploy them. The sliding scale framework offers a practical decision logic: Evangelist for pre-flight design, Optimist for privacy-compliant portability, Hesitant for classical-vs-ML imputation choices, and a firm Rejector position on sample size inflation.

The researchers who use synthetic data well treat it as scaffolding, not structure. It builds better instruments, protects respondent privacy across borders, and surfaces breaking points before expensive fieldwork begins. It does not replace the 800 real consumers who tell you something you did not expect, because nothing does.

If your team is navigating the methodology decisions that come with integrating AI into a research workflow, where synthetic data fits, where live data is non-negotiable, and how to defend either choice to a board, that is exactly the kind of programme H-in-Q.com is built to run with you. Book your free AI research strategy session →

The market has enough studies built on manufactured confidence. Build the ones that hold up.