Note: This case study is hypothetical. It is based on industry-verified benchmarks. Results cited reflect outcomes across similar AI market research implementations.

Every research director who has commissioned a traditional brand tracking study knows the feeling three weeks into a six-week project: the market has already moved. The data you are paying for is aging in real time, and by the time the report lands, half of what it describes is already history.

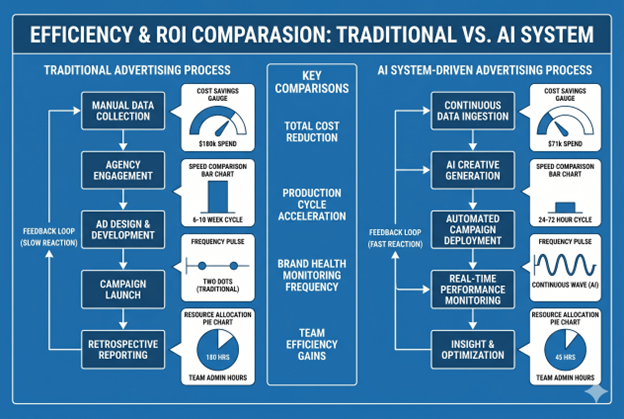

That was the situation facing the marketing director of a mid-sized FMCG brand operating across Morocco, Tunisia, and the UAE in early 2024. The company ran a strong consumer product portfolio across personal care, household, and food categories, and invested approximately $180,000 annually in market research across three primary studies: a semi-annual brand health tracker, an annual consumer segmentation study, and quarterly ad concept testing. The research was credible, methodologically sound, and consistently delivered six to ten weeks after commissioning. It was also consistently too slow to act on in a market moving at the speed of social conversation.

What happened when they replaced the majority of that traditional research stack with an AI-powered alternative is the subject of this case study.

See our AI market research conversational solution

The Problem: Research That Arrived After the Moment Had Passed

The company’s research challenge had three distinct components that together were costing the brand more than the research budget line suggested.

Component 1: Speed lag on brand health. The semi-annual brand tracker took eight weeks from commissioning to final report. By the time sentiment data arrived, product launches, competitor activity, and regional media events had already shaped consumer perception in ways the tracker could not capture. The brand was measuring brand health at two snapshots per year while the market moved continuously.

Component 2: Concept testing bottleneck. Ad and product concept testing ran on a 4–6 week agency cycle. The creative team was routinely waiting on research validation before confirming campaign spend, a bottleneck that delayed campaign launches and reduced the team’s ability to iterate based on early signals.

Component 3: Multilingual blind spots. The brand operated across Arabic, French, and English-speaking markets. Traditional research in each language required separate agency engagements, separate recruitment, and separate analysis, tripling the operational overhead of any multi-market study. Cross-market comparison was slow and imprecise.

The total annual research investment of $180,000 was funding three recurring research programs. The marketing director’s assessment: too expensive per insight, too slow to inform real decisions, and structurally incapable of covering the Arabic and French consumer conversations that represented the brand’s core growth markets.

The Approach: A Phased Transition to AI-Powered Research

The transition did not happen in a single switch. It followed a deliberate three-phase process that protected against data quality risk while progressively shifting the research mix toward AI.

Phase 1 (Months 1–2): Parallel running and baseline calibration

Before replacing any existing research, the team ran AI social listening alongside the existing brand tracker for one full cycle. BuzzPulse-in-Q monitored brand mentions, competitor activity, and category conversations across Arabic, French, and English social media, news, and review platforms simultaneously. The output of that parallel period served two purposes: it validated that AI-detected sentiment trends aligned directionally with the survey findings that arrived eight weeks later, and it established the brand’s baseline signal across each language market.

The calibration finding was significant: BuzzPulse-in-Q identified a shift in consumer sentiment around one of the brand’s personal care products approximately five weeks before the traditional tracker reported the same trend. That lead time, five weeks of advance signal, was the business case for the transition in a single data point.
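A lead time like this can be estimated during a parallel run by checking at which lag the faster signal best matches the slower one. The following is a minimal sketch, not the method used in this case study: it assumes, hypothetically, that both signals can be expressed as weekly scores, and the function names and data are made up for illustration.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / sqrt(sxx * syy)

def estimate_lead_weeks(leading, lagging, max_lag=8):
    """Return (lag, correlation) for the lag at which `leading` best matches `lagging`."""
    best_lag, best_corr = 0, float("-inf")
    for lag in range(max_lag + 1):
        xs = leading[: len(leading) - lag]  # earlier values of the fast signal
        ys = lagging[lag:]                  # later values of the slow signal
        if len(xs) < 3:                     # too few points to correlate
            break
        corr = pearson(xs, ys)
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return best_lag, best_corr

# Made-up weekly sentiment scores, for illustration only.
ai_signal = [0.60, 0.62, 0.61, 0.63, 0.60, 0.55, 0.48, 0.40, 0.35, 0.33,
             0.34, 0.32, 0.35, 0.33, 0.34, 0.36, 0.35, 0.37, 0.36, 0.38]
# Hypothetical tracker-equivalent series: the same movement, five weeks later.
tracker_signal = [ai_signal[0]] * 5 + ai_signal[:-5]

lead, corr = estimate_lead_weeks(ai_signal, tracker_signal)
print(f"Estimated lead: {lead} weeks (correlation {corr:.2f})")
```

With real data the correlation peak will be less clean than in this constructed example, so the estimated lag is best read as directional evidence rather than a precise measurement.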

See BuzzPulse-in-Q results in a real case study

Phase 2 (Months 3–6): Replacing the brand tracker with continuous monitoring

The semi-annual brand tracker was retired and replaced with continuous AI-powered brand monitoring. Instead of two deep-dive surveys per year, the marketing team received weekly brand health reports automatically generated from BuzzPulse-in-Q data, covering sentiment by market, by product category, by language, and by competitor. Monthly synthesis sessions with the research team replaced the biannual agency presentations.

The shift produced an immediate operational change: brand health monitoring moved from a project-based activity that consumed six weeks of team attention twice per year to an always-on signal that surfaced relevant changes automatically and required human attention only when anomalies appeared.

Phase 3 (Months 7–12): AI-powered concept testing and consumer research

The final phase replaced ad concept testing with Converse-in-Q’s adaptive conversational research. Instead of submitting creative concepts to a 4–6 week agency testing cycle, the team ran AI-moderated consumer conversations across target segments in Morocco, Tunisia, and the UAE, receiving structured qualitative and quantitative findings within 72 hours.

This phase also introduced AI-powered competitive intelligence: automated monitoring of competitor product launches, pricing changes, and campaign activity across all three markets, replacing the manual competitive analysis that had previously required periodic agency engagement.

The Results: Before and After

| Metric | Traditional Research Stack | AI-Powered Research Stack |
| --- | --- | --- |
| Annual research cost | $180,000 | $71,000 |
| Cost reduction | n/a | 61% |
| Brand health insight frequency | 2x per year | Continuous (weekly reports) |
| Time from question to insight | 6–10 weeks | 24–72 hours |
| Concept testing cycle | 4–6 weeks | 72 hours |
| Language coverage | 3 separate engagements | Simultaneous EN/FR/AR |
| Competitive monitoring | Periodic (ad hoc) | Real-time, automated |
| Early warning capability | None | 4–6 weeks ahead of survey signal |
| Team hours on research admin | ~180 hrs/year | ~45 hrs/year |
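The percentage figures follow directly from the before and after values in the table. As a quick check, here is a small sketch of that arithmetic; the helper name `percent_reduction` is illustrative and not part of any platform.

```python
def percent_reduction(before: float, after: float) -> float:
    """Return the percentage drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100

# Figures taken from the table above.
cost_cut = percent_reduction(180_000, 71_000)  # annual research cost, USD
hours_cut = percent_reduction(180, 45)         # team hours on research admin

print(f"Cost reduction: {cost_cut:.1f}%")
print(f"Admin hours reduction: {hours_cut:.1f}%")
```

The cost figure works out to roughly 60.6%, which the table rounds to 61%, on an annual saving of $109,000. The same calculation puts the reduction in research admin hours at 75%.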

The headline number, a 61% cost reduction, reflects the replacement of agency fees with subscription-based AI platform access. But the more strategically significant outcomes were the ones that do not appear directly in a cost line.

The early warning advantage proved its value within the first six months. BuzzPulse-in-Q flagged a rising negative sentiment pattern around product packaging in the UAE market approximately four weeks before it would have appeared in a scheduled tracker. The brand responded by accelerating a packaging redesign that was already in development, repositioning the launch as a proactive quality commitment rather than a reactive fix. The competitive optics of that timing shift were significant.

Concept testing speed transformed campaign planning. With 72-hour concept test turnaround replacing four-to-six-week cycles, the creative team ran validation on four times as many creative concepts in the same calendar period, testing iterations rather than just selecting from initial options. Campaign performance in the second half of the implementation year improved by an estimated 28% on key engagement metrics compared to the prior year, a result the marketing director attributed primarily to higher-quality creative validation upstream.

Multilingual research parity was achieved for the first time in the brand’s history. Arabic, French, and English consumer sentiment were tracked with equivalent depth and speed, from a single platform, without separate agency engagements. The cross-market comparison capability, seeing how the same campaign message landed differently in Casablanca versus Dubai versus Tunis, produced strategic insights that the previous research structure could not generate at all.

What Worked, and What Required Adjustment

What worked immediately:

Continuous social listening and brand monitoring delivered value from day one. The always-on nature of AI monitoring, surfacing signals 24 hours a day without additional cost, produced a research rhythm that felt fundamentally different from the project-based cycle it replaced. The marketing team described it as the difference between checking the weather once a week and having a live weather feed.

Multilingual coverage was the most immediate qualitative win. The simultaneous monitoring of Arabic, French, and English consumer conversations, with language-appropriate sentiment analysis, addressed a research gap that had been structurally present for years.

What required calibration:

AI sentiment analysis on Arabic dialectal text required a 6–8 week calibration period before achieving reliable accuracy on regional Arabic variants; Moroccan Darija, Gulf Arabic, and Tunisian Arabic differ significantly from Modern Standard Arabic, and the platform required adaptation to the brand’s specific category vocabulary in each dialect. This is an important detail for any brand operating across Arabic-speaking markets: out-of-the-box Arabic sentiment analysis should be calibrated on regional dialect data before being used for strategic decisions.

Concept testing via AI-moderated conversations produced stronger results for explicit attitude measurement (which messaging resonates, which visual approach registers higher interest) than for the emotional depth of motivation that human focus group moderation is better suited to uncover. The team maintained one annual qualitative research session with real consumers for strategic brand questions where motivational depth was required, and used AI-moderated research for the iterative concept testing that represented the majority of their research volume.

The lesson: AI market research excels at volume, speed, and continuous monitoring. It requires calibration for specialized language contexts. It complements rather than fully replaces deep qualitative human research for strategic brand questions.
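One way to make the dialect calibration step concrete is to score the platform’s sentiment labels against a small hand-labeled sample for each dialect, and hold back strategic use until agreement is acceptable. The sketch below is an assumption-laden illustration: the sample data, the label names, and the 0.8 threshold are hypothetical, and it does not describe how BuzzPulse-in-Q calibrates internally.

```python
from collections import defaultdict

def accuracy_by_dialect(samples):
    """Share of model labels that match human labels, grouped by dialect."""
    hits, totals = defaultdict(int), defaultdict(int)
    for dialect, human_label, model_label in samples:
        totals[dialect] += 1
        hits[dialect] += human_label == model_label  # True counts as 1
    return {d: hits[d] / totals[d] for d in totals}

def dialects_needing_calibration(accuracy, threshold=0.8):
    """Dialects whose agreement with human labels falls below `threshold`."""
    return sorted(d for d, acc in accuracy.items() if acc < threshold)

# Hypothetical hand-labeled check set: (dialect, human label, model label).
samples = [
    ("darija", "neg", "neg"), ("darija", "pos", "neg"),
    ("darija", "neg", "pos"), ("darija", "pos", "pos"),
    ("gulf", "pos", "pos"), ("gulf", "neg", "neg"),
    ("gulf", "neu", "neu"), ("gulf", "pos", "pos"), ("gulf", "neg", "pos"),
    ("msa", "pos", "pos"), ("msa", "neg", "neg"),
]

accuracy = accuracy_by_dialect(samples)
print(accuracy)
print(dialects_needing_calibration(accuracy))
```

A real check set would need far more examples per dialect, drawn from the brand’s own category vocabulary, before the per-dialect scores mean much.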

See what AI market research looks like in practice


The Implementation Roadmap: How to Replicate This

For US businesses and global brands considering a similar transition, the implementation sequence that produced these results follows a clear pattern.

Start with parallel running, not replacement. Run AI monitoring alongside your existing research for one full cycle before retiring anything. The calibration period builds confidence, catches platform limitations, and produces the data point, the comparison between AI-detected signal and traditional research findings, that justifies the investment to stakeholders.

Replace continuous monitoring first. The easiest and highest-ROI first step is replacing periodic brand health tracking with continuous AI social listening. This produces immediate cost savings, immediate speed improvement, and immediate multi-market coverage, without touching the primary research workflows that require more careful transition.

Build the data quality layer before scaling. For any specialized language or category context, invest in platform calibration before relying on outputs for strategic decisions. The six-to-eight-week calibration investment for Arabic dialect sentiment analysis was the most important quality control step in this implementation.

Retain traditional research for decisions where it is irreplaceable. Deep qualitative insight, validated primary data for major strategic decisions, and regulatory-grade research for specific categories still require human-led traditional methods. The goal is not to eliminate traditional research; it is to reallocate it to the decisions that genuinely require it, and replace the volume of recurring research activity that AI handles more efficiently.

Read more about how AI is changing consumer research in 2026

How AI Is Transforming Research Across MENA Brands in 2026

The MENA market presents specific challenges that make AI-powered research particularly valuable. Language fragmentation, with Arabic dialects, French, and English often coexisting within a single market, has historically made multi-market consumer research disproportionately expensive in the region. A brand operating in Morocco, the Gulf, and Egypt previously needed three separate research programs to achieve equivalent market coverage.

AI platforms with native multilingual NLP eliminate that structural cost premium. For MENA-focused brands, AI market research is not just a speed and cost improvement; it is the first time that Arabic-language consumer insight can be generated at the same speed and scale as English-language research. That parity represents a genuine research infrastructure advance that traditional methods could not achieve at any price point.

H-in-Q.com’s BuzzPulse-in-Q was built specifically for this context: multilingual brand intelligence covering English, French, Spanish, and Arabic simultaneously, designed for organizations operating across MENA and beyond where single-language tools leave critical markets unmonitored. The results described in this case study reflect what is achievable when the right research infrastructure is matched to the right market context.

See how AI vs traditional market research compares on cost and speed

Explore the 7 best AI market research tools for 2026

Frequently Asked Questions: MENA Brand AI Market Research Results

How much can AI reduce market research costs?

AI market research typically reduces costs by 50–60% compared to traditional agency-led studies. The reduction comes from replacing per-study agency fees with subscription-based AI platform access, eliminating manual analysis labor, and consolidating multi-market research into a single platform. SMB marketers using AI save an average of $5,000 per month in operational research costs, according to InsightMark Research benchmarks.

How long does AI market research take compared to traditional surveys?

Traditional studies take 4–12 weeks from commission to final report. AI-powered social listening and brand monitoring delivers continuous real-time signals. AI-moderated concept testing delivers structured findings in 72 hours. AI survey platforms with automated analysis deliver quantitative research results in 3–5 days. The speed advantage compounds over time: brands running AI research make 4x as many insight-backed decisions in the same period.

Is AI market research reliable enough for strategic decisions?

AI market research is reliable for the workflows it is designed for: sentiment trend detection, competitive monitoring, brand health tracking, and concept testing at scale. It requires calibration for specialized language contexts like Arabic dialects and niche industry vocabulary. For the highest-stakes strategic decisions, such as major brand repositioning, market entry, or product launch validation, best practice combines AI research for continuous signal with targeted traditional primary research for final validation.

What is the biggest challenge when implementing AI market research?

The most common implementation challenge is calibration time for specialized language or category contexts. Out-of-the-box AI sentiment analysis achieves strong accuracy on standard English text but requires 4–8 weeks of calibration on regional dialects, technical vocabulary, or niche category language before producing reliable strategic outputs. Brands that skip calibration often conclude AI research is less accurate than it actually is; the limitation is setup, not the technology.

What research workflows should be kept traditional even after adopting AI?

Two research workflows consistently produce better results with traditional human-led methods: deep qualitative research exploring emotional motivations and unconscious consumer psychology, and primary data validation for regulatory or statistically significant decision thresholds. AI replaces the volume of routine tracking, monitoring, and concept testing, but human-moderated depth interviews and validated statistical surveys remain the standard for strategic questions where the cost of being wrong is high.

How does AI market research work for multilingual markets like MENA?

AI platforms with native multilingual NLP monitor Arabic, French, English, and other languages simultaneously from a single platform, eliminating the need for separate regional research engagements. For Arabic specifically, regional dialect calibration is required: Modern Standard Arabic models perform poorly on Moroccan Darija, Gulf Arabic, or Levantine dialects without adaptation. Platforms like BuzzPulse-in-Q are built for this multi-dialect context, achieving reliable sentiment accuracy across MENA Arabic variants after the initial calibration period.

Conclusion

The numbers from this case study are consistent with benchmarks across the industry: 61% cost reduction, brand health monitoring frequency moving from twice per year to continuous, concept testing compressing from four to six weeks down to 72 hours, and multilingual research parity achieved for the first time across three language markets.

The more durable lesson is strategic: AI market research does not just make existing research cheaper and faster. It enables a fundamentally different research model, one where insight is continuous rather than periodic, where early warning replaces post-hoc reaction, and where the language and market coverage gaps that fragmented MENA research for decades can finally be closed from a single platform.

The implementation requires calibration investment, particularly for specialized language contexts. It requires retaining traditional research for the strategic decisions that genuinely need it. And it requires the organizational discipline to act on continuous signals rather than waiting for the quarterly or annual report to arrive.

For brands willing to invest in that transition, the ROI evidence is consistent and clear.

Ready to see what this looks like for your brand and markets? H-in-Q.com builds custom AI market research infrastructure for brands operating across MENA, North Africa, and multilingual global markets. Book your free strategy call →